Who Gets to Decide AGI’s Ethics?

Why the answer cannot be a small room of researchers

In most current approaches, a small team of researchers and ethicists makes that decision for everyone. These are often thoughtful, well-intentioned people. But should ANY small group be trusted to determine the ethical foundations of a technology that will eventually affect 8.3 billion humans?

Our architecture takes a different approach.

It aggregates the ethical values of millions of individual contributors into a collective model through a transparent, auditable process.

Here is how it works.

During the customization process described in Post 4 (Your AI Should Think Like You), ethical knowledge is captured in the same way as domain knowledge. When owners correct their AI agents’ outputs, state a preference for Fair Trade cafes, or answer questions about their values, those judgments become part of each agent’s ethical profile alongside its owner’s factual knowledge. Every customized AI agent on the network carries its owner’s ethical values as a built-in part of its training.
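To make this concrete, here is a minimal sketch of an agent’s ethical profile stored as ordinary training data. It assumes each judgment can be reduced to a topic, a stance between -1 and +1, and the channel it was captured through (a correction, a stated preference, or a questionnaire answer). The names `EthicalJudgment`, `AgentProfile`, `stance`, and `record` are hypothetical illustrations, not the architecture’s actual schema.

```python
from dataclasses import dataclass, field
from typing import Literal

# Hypothetical capture channels, mirroring the three examples in the text.
Source = Literal["correction", "preference", "questionnaire"]

@dataclass(frozen=True)
class EthicalJudgment:
    """One owner judgment, stored like any other training example (hypothetical schema)."""
    topic: str       # e.g. "fair_trade"
    stance: float    # -1.0 (strongly against) .. +1.0 (strongly for)
    source: Source   # how the judgment was captured

@dataclass
class AgentProfile:
    """Hypothetical per-agent store: domain knowledge and ethics side by side."""
    owner_id: str
    facts: list[str] = field(default_factory=list)
    ethics: list[EthicalJudgment] = field(default_factory=list)

    def record(self, judgment: EthicalJudgment) -> None:
        # Reject stances outside the assumed -1 .. +1 scale.
        if not -1.0 <= judgment.stance <= 1.0:
            raise ValueError("stance must be in [-1, 1]")
        self.ethics.append(judgment)

profile = AgentProfile(owner_id="owner-123")
profile.record(EthicalJudgment("fair_trade", 0.8, "preference"))
profile.record(EthicalJudgment("data_privacy", 1.0, "questionnaire"))
profile.record(EthicalJudgment("honest_disclosure", 0.5, "correction"))
print(len(profile.ethics))  # 3
```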

When the Integration subsystem aggregates individual agent datasets into a collective model, it aggregates ethical information alongside factual and procedural knowledge.

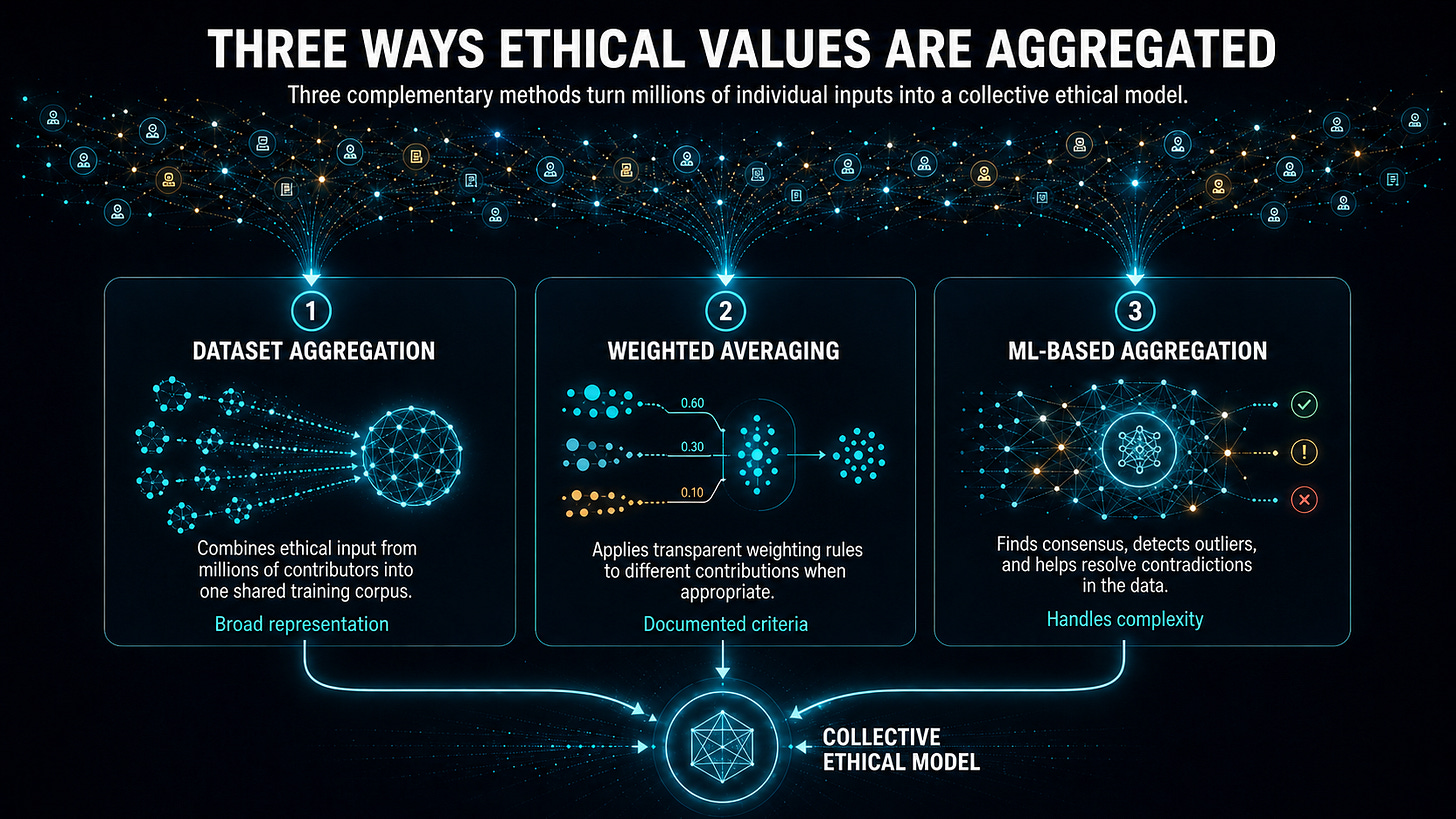

This happens through three methods:

Dataset aggregation. The ethical data from millions of contributors is combined into a collective corpus, and the integrated system is trained on it. Because views held by many contributors appear more often in that corpus, they naturally carry more weight than minority views, creating a form of implicit democratic representation without any single authority deciding whose values count more.
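The mechanism behind this implicit majority weighting can be shown with a toy pooled corpus. In this sketch (the question IDs, answers, and function name are hypothetical), judgments are pooled with no per-contributor weights and the per-question answer frequencies are computed. For a model that conditions only on the question, the maximum-likelihood fit to such a corpus is exactly these frequencies, so the most common answer becomes its top prediction. A real system conditions on far richer inputs, so treat this as an idealization.

```python
from collections import Counter, defaultdict

# Hypothetical pooled corpus of (question_id, answer) pairs drawn from many
# contributors' agent datasets. No contributor carries an explicit weight.
corpus = [
    ("q_fair_trade", "prefer"), ("q_fair_trade", "prefer"),
    ("q_fair_trade", "neutral"), ("q_fair_trade", "prefer"),
    ("q_privacy", "protect"), ("q_privacy", "share"),
    ("q_privacy", "protect"),
]

def empirical_distribution(pairs):
    """Return per-question answer frequencies for a pooled corpus."""
    counts = defaultdict(Counter)
    for question, answer in pairs:
        counts[question][answer] += 1
    dist = {}
    for question, answer_counts in counts.items():
        total = sum(answer_counts.values())
        dist[question] = {a: n / total for a, n in answer_counts.items()}
    return dist

dist = empirical_distribution(corpus)
# The majority answer is simply the most frequent one; nobody assigned it extra weight.
top = {q: max(d, key=d.get) for q, d in dist.items()}
print(dist["q_fair_trade"])  # {'prefer': 0.75, 'neutral': 0.25}
print(top)                   # {'q_fair_trade': 'prefer', 'q_privacy': 'protect'}
```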

Weighted averaging. Individual ethical contributions can be assigned explicit weights based on documented criteria. Contributors with a demonstrated ethical track record across many network interactions might (optionally) carry more weight on contested ethical questions in their domains of expertise. The weighting methodology is documented and transparent, allowing the community to audit it and set the rules.
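A documented weighting rule might look like the sketch below. Everything in it is an assumption for illustration: the `track_record` measure, the in-domain flag, and the constants (one extra unit of weight per 1,000 reviewed interactions, capped at double weight). The point is that every parameter is explicit and auditable, and the track-record bonus can be switched off entirely, matching the optional weighting described above.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Contribution:
    contributor_id: str
    stance: float       # -1.0 (against) .. +1.0 (for) on one contested question
    track_record: int   # hypothetical: positively reviewed network interactions
    in_domain: bool     # hypothetical: is the question in the contributor's domain?

# Hypothetical published rule: each constant is a documented parameter
# that the community could audit or vote to change.
BASE_WEIGHT = 1.0
RECORD_SCALE = 1000   # interactions per extra unit of weight
MAX_BONUS = 1.0       # cap: at most double the base weight

def weight(c: Contribution, use_track_record: bool = True) -> float:
    """Weight for one contribution under the published rule."""
    if not (use_track_record and c.in_domain):
        return BASE_WEIGHT
    return BASE_WEIGHT + min(MAX_BONUS, c.track_record / RECORD_SCALE)

def weighted_stance(contribs: list[Contribution], use_track_record: bool = True) -> float:
    """Weighted average stance across all contributions to one question."""
    weights = [weight(c, use_track_record) for c in contribs]
    total = sum(weights)
    return sum(w * c.stance for w, c in zip(weights, contribs)) / total

contribs = [
    Contribution("a", 1.0, track_record=1200, in_domain=True),   # bonus capped: 2.0
    Contribution("b", -1.0, track_record=0, in_domain=True),     # weight 1.0
    Contribution("c", -1.0, track_record=500, in_domain=False),  # out of domain: 1.0
]
print(weighted_stance(contribs))                                    # 0.0
print(round(weighted_stance(contribs, use_track_record=False), 2))  # -0.33
```

Publishing both numbers side by side is one way to let anyone see exactly what the weighting changed on a given question.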

Machine learning-based aggregation. More sophisticated methods identify consensus positions across contributors, detect outliers, and resolve apparent contradictions by drawing on deeper patterns in the ethical training data, supplementing the simple majority positions.
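The post does not specify which machine-learning methods are used, so the sketch below swaps in a deliberately simple robust-statistics stand-in rather than a learned model: the median serves as the consensus position, and contributors are flagged as outliers when their modified z-score (based on the median absolute deviation, MAD) exceeds a conventional threshold of 3.5. The function name and data are hypothetical. Flagged contributions are returned for review rather than silently discarded, which keeps the step auditable.

```python
import statistics

def consensus_and_outliers(stances: dict[str, float], threshold: float = 3.5):
    """Return (consensus, flagged_ids) for one question.

    Consensus is the median stance. A contributor is flagged when the
    modified z-score 0.6745 * (stance - median) / MAD exceeds `threshold`
    in absolute value; MAD is the median absolute deviation from the median.
    """
    values = list(stances.values())
    med = statistics.median(values)
    mad = statistics.median([abs(v - med) for v in values])
    if mad == 0:
        # More than half the stances equal the median, so any deviation is extreme.
        flagged = [cid for cid, v in stances.items() if v != med]
    else:
        flagged = [cid for cid, v in stances.items()
                   if abs(0.6745 * (v - med) / mad) > threshold]
    return med, flagged

# Hypothetical stances on one question, on a -1 .. +1 scale.
stances = {"a": 0.6, "b": 0.7, "c": 0.5, "d": 0.65, "e": -1.0}
consensus, flagged = consensus_and_outliers(stances)
print(consensus, flagged)  # 0.6 ['e']
```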

Voting extends this democratic participation further. Users can vote on specific ethical questions that affect how the AGI behaves. For example, humans can vote on which philosophical frameworks should carry more weight in contested situations, how the system should behave when competing values conflict, and what categories of decisions should always require human authorization. These mechanisms help the community shape the AGI system’s values.
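One way the vote on framework weights could work is a ranked ballot tallied with a Borda count, shown below as an illustrative assumption (the framework labels and function name are hypothetical). Borda is used here because it yields graded scores rather than a single winner, which maps naturally onto how much weight each framework should carry; other voting rules can produce different results from the same ballots.

```python
def borda_weights(ballots: list[list[str]]) -> dict[str, float]:
    """Convert ranked ballots into normalized weights using a Borda count.

    With n candidates, a first-place ranking earns n - 1 points, second place
    n - 2, and so on down to 0 for last. Totals are normalized to sum to 1.
    """
    if not ballots:
        raise ValueError("need at least one ballot")
    candidates = set(ballots[0])
    n = len(candidates)
    if n < 2:
        raise ValueError("need at least two candidates")
    scores = {c: 0 for c in candidates}
    for ballot in ballots:
        # Every ballot must rank every candidate exactly once.
        if len(ballot) != n or set(ballot) != candidates:
            raise ValueError(f"invalid ballot: {ballot}")
        for position, candidate in enumerate(ballot):
            scores[candidate] += n - 1 - position
    total = sum(scores.values())
    return {c: scores[c] / total for c in sorted(candidates)}

# Hypothetical framework labels and ballots.
ballots = [
    ["consequentialist", "deontological", "virtue"],
    ["deontological", "virtue", "consequentialist"],
    ["deontological", "consequentialist", "virtue"],
]
for framework, w in borda_weights(ballots).items():
    print(f"{framework}: {w:.3f}")
# consequentialist: 0.333
# deontological: 0.556
# virtue: 0.111
```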

There is a philosophical point here that matters. The philosopher David Hume argued in A Treatise of Human Nature (1739–1740) that values cannot be derived logically from facts alone: no chain of reasoning about what is can, by itself, establish what ought to be. More recently, the Nobel laureate and AI pioneer Herbert A. Simon amplified this point in his book Reason in Human Affairs.

Even an intelligence far more capable than any human cannot reason its way to values. It must get them from some source other than logical reasoning. Values that emerge from a diverse, democratic process have a legitimacy that values imposed by any small group lack.

The safeguard against ethics going wrong is transparency.

The methodology for aggregating ethics must be documented so contributors can understand how their input affects the AGI’s collective values. When the aggregated ethics produce problematic results, the methodology can be examined and corrected. Accountability must be built into the architecture, not added as an afterthought.
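One way to let contributors see how their input affects the collective values is to publish each contributor’s leave-one-out influence: how far the aggregate moves when that single contribution is removed. The sketch assumes, for illustration only, that the aggregate on a question is a weighted mean of stances on a -1 to +1 scale; the function names and data are hypothetical.

```python
def weighted_mean(stances: list[float], weights: list[float]) -> float:
    """Weighted mean of stances on a -1 .. +1 scale."""
    return sum(s * w for s, w in zip(stances, weights)) / sum(weights)

def leave_one_out_influence(stances: dict[str, float],
                            weights: dict[str, float]) -> dict[str, float]:
    """Change in the aggregate attributable to each contributor.

    influence[i] = aggregate with everyone - aggregate without i, so a
    positive value means contributor i pulled the aggregate upward.
    Requires at least two contributors.
    """
    ids = list(stances)
    if len(ids) < 2:
        raise ValueError("need at least two contributors")
    full = weighted_mean([stances[i] for i in ids], [weights[i] for i in ids])
    influence = {}
    for i in ids:
        rest = [j for j in ids if j != i]
        without = weighted_mean([stances[j] for j in rest],
                                [weights[j] for j in rest])
        influence[i] = full - without
    return influence

# Hypothetical contributors on one question.
stances = {"a": 1.0, "b": -1.0, "c": -1.0}
weights = {"a": 2.0, "b": 1.0, "c": 1.0}
for cid, delta in leave_one_out_influence(stances, weights).items():
    print(f"{cid}: {delta:+.3f}")
# a: +1.000
# b: -0.333
# c: -0.333
```

Because contributor a carries double weight, removing a would shift the aggregate by a full point; publishing figures like these alongside the methodology makes the accountability checkable rather than merely asserted.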

If the system is properly designed, the result is an AGI whose ethics are not simply programmed in. Instead, they grow from millions of real human contributions, are refined through interaction, and are continuously updated as the contributor base evolves. This is one of the few approaches to AI alignment that does not require trusting that the people in charge of training are both well-intentioned and able to determine the right values for everyone.

In the next post, we look at the Improvement subsystem and the key constraint that governs every upgrade the system makes.

For AI researchers who want details of the approach, we recommend starting with White Paper 1: Advanced Autonomous Artificial Intelligence Systems and Methods to see how it all works.

If this made you think, subscribe to Superintelligence at read.superintelligence.com so you don’t miss what comes next. And if someone in your life needs to understand where AI is heading, send this to them.