Why Everyone Is Solving the Wrong Problem

The flaw that bigger models cannot fix

What if every major AI lab in the world is spending hundreds of billions of dollars solving the wrong problem?

Microsoft, Google, Meta, and the other leading AI labs are all working on the same challenge: how do you build a single AI system smart enough to match human general intelligence? They are pouring that money into this one question. They are hiring the best researchers in the world. And they are solving the wrong problem.

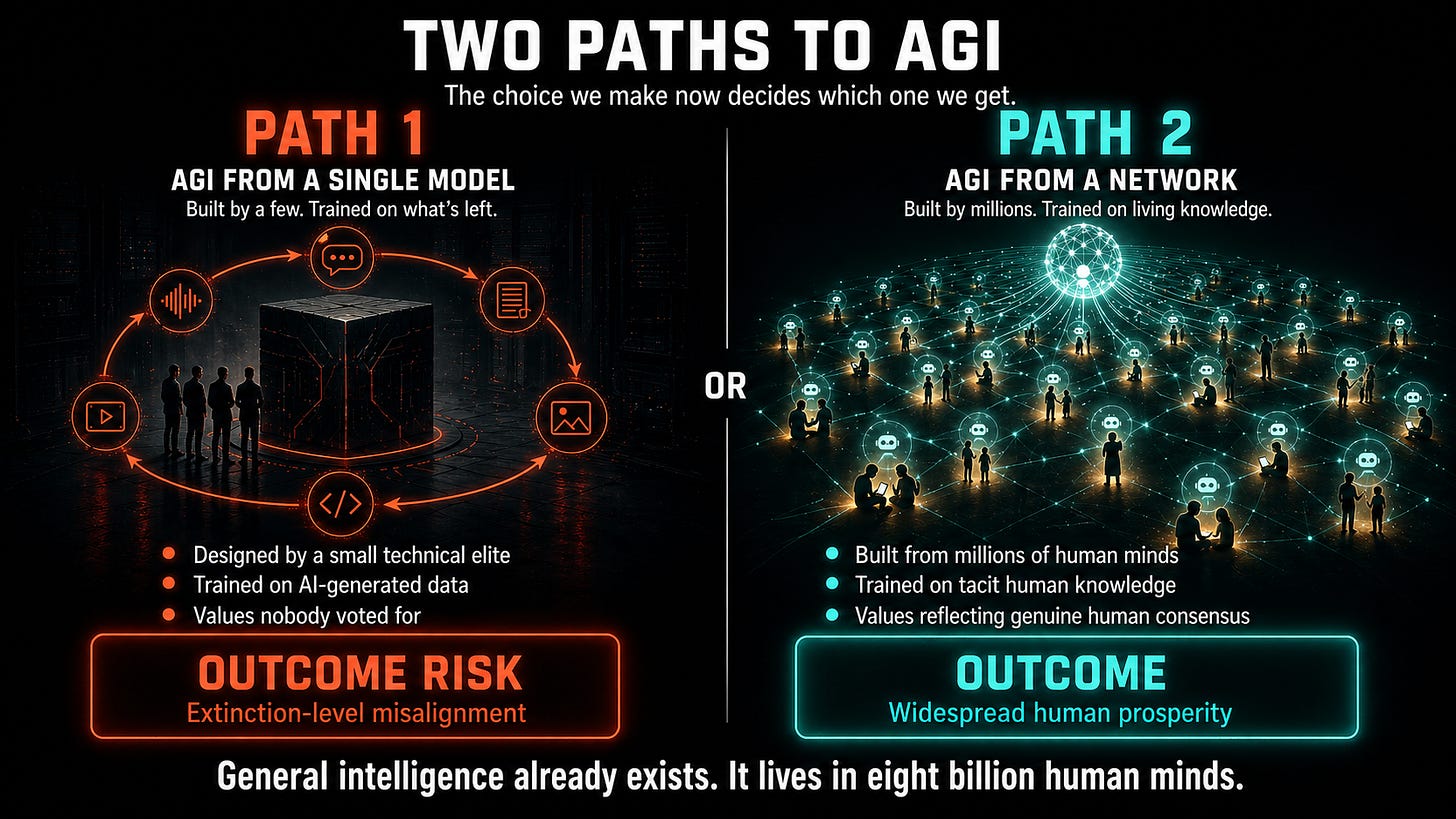

Here is why this matters to you. Which approach wins will determine what kind of Artificial General Intelligence (AGI) emerges. If the leading labs succeed on their current path, we get AGI designed by a small technical elite, trained on data increasingly generated by AIs, and aligned to values nobody voted for. If a new approach wins, we get AGI whose knowledge comes from millions of human minds and whose values reflect something much closer to a genuine human consensus. Those two outcomes could mark the difference between widespread human prosperity and total human extinction.

The core assumption driving every major AI lab right now is that a single AGI can be built from scratch with enough computation. The reasoning is simple: train a large enough model on enough data, and AGI will emerge. Acting on this assumption has produced impressive results so far. But the assumption has a fundamental flaw: the most valuable human data is very difficult to capture by mining text or memorizing information already on the internet.

General intelligence already exists. It’s distributed across eight billion human minds, each carrying unique knowledge, expertise, and judgment accumulated over a lifetime. The surgeon who can tell by feel alone that something is wrong. The teacher who understands exactly why a particular student is struggling. The engineer who can diagnose a failing system from its sound. This kind of knowledge can be gathered more effectively by involving people in active problem-solving than by passively scraping data already available online. The data that would make AI truly general (tacit knowledge, hard-won judgment, ethical intuitions shaped by real experience) is precisely the data that existing approaches are not designed to reach.

Imagine trying to train a world-class expert solely through published articles. You’d get a lot of content. You’d miss much of what actually matters: the knowledge that experts carry in their heads and have never bothered to write down because it seemed too obvious, too contextual, or too difficult to explain.

The answer to this problem is not to build a single super-smart AI. We need to build a smarter system that combines human and AI intelligence. AGI shouldn’t be created separately from humans. It should arise from an organized network of humans and AIs.

Instead of trying to recreate human intelligence in a single AI, we should create the infrastructure that enables millions of human minds to communicate their knowledge, values, and judgments directly to millions of AIs that represent them.

In the next post, I’ll explain why the fastest path to AGI and the safest path turn out to be the same path, and why that follows directly from the argument above.

The architecture behind this goes much deeper. To see exactly how it all works, read White Paper 1, Advanced Autonomous Artificial Intelligence Systems and Methods, at superintelligence.com/whitepaper1-aaai-systems-methods.

If this made you think, subscribe to Superintelligence at read.superintelligence.com so you don’t miss what comes next. And if someone in your life needs to understand where AI is actually heading, send this to them.