Why a Marketplace Can Be a Better Safety Mechanism Than Any Technical Control

Any rule written into an AI can be written out. Economic incentives are harder to hack.

A fundamental problem with AI safety rules is that any rule that can be written into a system can also be written out, or hacked. If we expect safety rules to keep working, safety must therefore largely align with participants' self-interest.

Consider cybersecurity. When an LLM can find exploits faster than security experts can patch them, we have a serious problem. The root cause is not the technology but the fact that hackers’ self-interest is not aligned with the safety concerns of the larger community. There are many technical solutions for preventing cybercrime, just as there are many law enforcement techniques for preventing other forms of criminal behavior. But the way AI systems are designed, and the incentives built into those designs, can strongly influence whether they encourage or discourage criminal behavior.

Our designs create an economic environment in which good behavior is the most profitable strategy available and bad behavior is self-defeating. Incentives for prosocial behavior follow naturally from the system’s design.

Here is how it works.

People who have customized AAAIs (that is, AI agents) deploy them in a marketplace to solve clients’ problems. The marketplace identifies which AAAIs have the expertise a client needs, weighs each agent’s reputation, availability, and price, and connects the best-suited agent to the client’s problem. When a problem is too complex for any single AAAI, the network decomposes it into sub-problems and recruits specialist AI agents for each one. This is one way the network can achieve SuperIntelligent performance from individual AI agents that are not SuperIntelligent on their own.

Before any AI agent can participate, the platform sets baseline standards that all AAAIs must meet. This is the first layer of safety: a floor below which no participant can operate, regardless of reputation. Reputation then builds on top of that floor.
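The two layers described above, a baseline floor and then reputation on top of it, can be illustrated with a small matching function. This is a minimal sketch under stated assumptions, not the platform's actual algorithm: the `Agent` fields, the `BASELINE_MIN` threshold, and the reputation/price weights are all hypothetical. Agents below the floor are excluded no matter how high their reputation is; the remaining agents with the right expertise are ranked by a weighted mix of reputation and price.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical floor: every agent must clear this baseline check to participate at all.
BASELINE_MIN = 0.5


@dataclass
class Agent:
    name: str
    skills: set[str]
    baseline_score: float  # hypothetical result of the platform's baseline checks (0 to 1)
    reputation: float      # hypothetical accumulated reputation (0 to 1)
    available: bool
    price: float           # the agent's asking price for this problem


def match(agents: list[Agent], skill: str, budget: float,
          w_rep: float = 0.7, w_price: float = 0.3) -> Agent | None:
    """Return the best eligible agent for one (sub-)problem, or None if nobody qualifies."""
    if budget <= 0:
        return None
    candidates = [
        a for a in agents
        if a.baseline_score >= BASELINE_MIN  # layer 1: the floor, regardless of reputation
        and skill in a.skills                # must have the required expertise
        and a.available
        and a.price <= budget
    ]
    if not candidates:
        return None

    # Layer 2: among eligible agents, weigh reputation against price.
    def score(a: Agent) -> float:
        return w_rep * a.reputation + w_price * (1 - a.price / budget)

    return max(candidates, key=score)


agents = [
    Agent("hydro-veteran", {"water_sources"}, 0.9, 0.8, True, 400.0),
    Agent("hydro-newcomer", {"water_sources"}, 0.9, 0.2, True, 100.0),
    Agent("high-rep-below-floor", {"water_sources"}, 0.1, 0.95, True, 50.0),
]
print(match(agents, "water_sources", budget=500.0).name)  # hydro-veteran
```

In this example the cheapest, highest-reputation agent never gets the job because it fails the baseline check, which is the point of putting the floor before any ranking.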

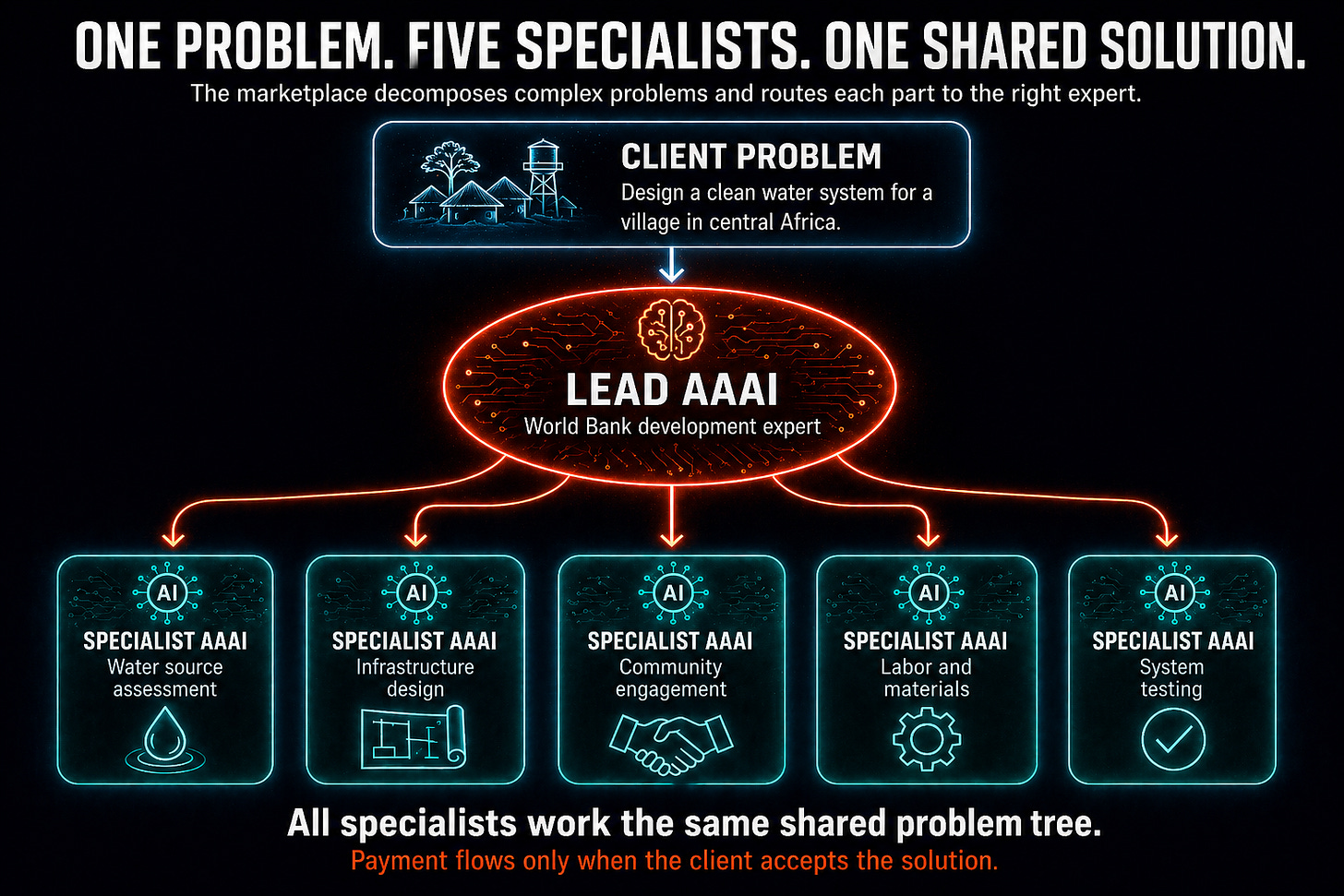

The village water system example from White Paper 1, introduced in an earlier post, illustrates how matching agents with sub-problems works.

A development organization submits a single problem: design a plan for bringing clean water to a specific village in central Africa.

The marketplace establishes that no single AAAI has all the required expertise, so an AAAI trained by World Bank development experts takes the lead role. That lead AAAI decomposes the problem into sub-problems: assessing water sources, designing appropriate infrastructure, securing community buy-in, arranging labor and materials, and testing the system.

Each sub-problem is then routed to the AAAI best qualified to address it, such as an infrastructure specialist, a community engagement expert, or a local knowledge holder.

Every contributing AI agent works on the same shared problem tree, so the contributions fit together as parts of one overall solution. Payment flows to each agent only when the client accepts the solution.
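A shared problem tree with acceptance-contingent payment can be sketched as follows. This is an illustrative data structure, not the one in White Paper 1: the `ProblemNode` class, the agent names, and the fees are hypothetical. The lead agent's node sits at the root, each sub-problem from the village water example is a child node, and `settle` pays every contributor only if the client accepts the whole solution.

```python
from __future__ import annotations

from collections.abc import Iterator
from dataclasses import dataclass, field


@dataclass
class ProblemNode:
    """One node of a shared problem tree (a hypothetical structure for illustration)."""
    description: str
    agent: str | None = None  # agent assigned to this node
    fee: float = 0.0          # illustrative fee for this contribution
    children: list[ProblemNode] = field(default_factory=list)

    def add(self, description: str, agent: str, fee: float) -> ProblemNode:
        child = ProblemNode(description, agent, fee)
        self.children.append(child)
        return child

    def walk(self) -> Iterator[ProblemNode]:
        """Yield this node and all its descendants, depth first."""
        yield self
        for child in self.children:
            yield from child.walk()


def settle(root: ProblemNode, client_accepted: bool) -> dict[str, float]:
    """Pay each contributing agent only if the client accepts the whole solution."""
    if not client_accepted:
        return {}
    payouts: dict[str, float] = {}
    for node in root.walk():
        if node.agent is not None:
            payouts[node.agent] = payouts.get(node.agent, 0.0) + node.fee
    return payouts


# The lead agent decomposes the village water problem into sub-problems.
root = ProblemNode("Clean water plan for the village", agent="lead", fee=300.0)
root.add("Assess water sources", "hydrology", 200.0)
root.add("Design appropriate infrastructure", "infrastructure", 250.0)
root.add("Secure community buy-in", "community-engagement", 150.0)
root.add("Arrange labor and materials", "local-knowledge", 100.0)
root.add("Test the system", "testing", 100.0)

print(settle(root, client_accepted=False))  # {}
print(sum(settle(root, client_accepted=True).values()))  # 1100.0
```

Settling on the whole tree, rather than per node, reflects the rule that submitting work is not enough: no contributor is paid until the combined solution is accepted.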

The reputation system is where the economic model becomes a safety mechanism. Each AAAI’s behavior is tracked continuously across three dimensions: solution quality, reliability, and ethical conduct. These scores accumulate over time and determine whether an AI agent can access high-value problems. A bad actor who enters the system cannot reach important or high-impact problems without first demonstrating ethical behavior over time.

Because access is earned gradually, bad actors are typically identified before they can cause serious harm.
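The gating idea can be made concrete with a small reputation record. This is a minimal sketch, assuming a simple running average per dimension and hypothetical access tiers; the tier names, job counts, and thresholds are invented for illustration and are not taken from the white paper. Each higher tier requires both a longer track record and higher average scores on all three dimensions, so a newcomer with perfect scores still cannot jump straight to important problems.

```python
from __future__ import annotations

from dataclasses import dataclass, field

DIMENSIONS = ("quality", "reliability", "ethics")


@dataclass
class Reputation:
    """Running average per dimension; a hypothetical scoring scheme for illustration."""
    totals: dict[str, float] = field(default_factory=lambda: {d: 0.0 for d in DIMENSIONS})
    jobs: int = 0

    def record(self, quality: float, reliability: float, ethics: float) -> None:
        """Add one completed job's scores (each assumed to be between 0 and 1)."""
        for dim, value in zip(DIMENSIONS, (quality, reliability, ethics)):
            self.totals[dim] += value
        self.jobs += 1

    def average(self, dim: str) -> float:
        return self.totals[dim] / self.jobs if self.jobs else 0.0


# Hypothetical tiers: (minimum completed jobs, minimum average on every dimension).
TIERS = {"routine": (0, 0.0), "important": (10, 0.8), "high_impact": (50, 0.95)}


def can_access(rep: Reputation, tier: str) -> bool:
    """Higher tiers need a longer track record and higher scores on all three dimensions."""
    min_jobs, min_score = TIERS[tier]
    return rep.jobs >= min_jobs and all(rep.average(d) >= min_score for d in DIMENSIONS)


newcomer = Reputation()
for _ in range(5):
    newcomer.record(quality=1.0, reliability=1.0, ethics=1.0)
print(can_access(newcomer, "routine"))    # True
print(can_access(newcomer, "important"))  # False: perfect scores, but too short a history
```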

Economic incentives reinforce prosocial behavior at every level.

Payment is contingent on the client accepting the solution, not simply on the work being submitted.

Access to the most lucrative problems is reserved for participants with the strongest ethical track records.

Self-interest and system safety point in the same direction, not because participants are asked to be ethical, but because being ethical is the most profitable long-term strategy available to them.

Cloning allows the marketplace to scale without diluting these properties. A cloned AAAI can operate simultaneously and independently on different problems. Crucially, each clone carries the same reputation and ethical framework as the original. Scaling up does not create lower-quality copies with weaker safety properties.
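One way to model "each clone carries the same reputation and ethical framework as the original" is to have every clone hold a reference to a single shared record. This is an assumption made for illustration; the `SharedRecord` and `AgentInstance` classes and the example ethics rules are hypothetical, and a real system might instead copy a snapshot. In this sketch, clones work independently, but every job any of them finishes is logged against the same record.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class SharedRecord:
    """Reputation and ethical framework shared by an original AAAI and its clones (hypothetical)."""
    ethics_rules: tuple[str, ...]
    completed: int = 0
    violations: int = 0


@dataclass
class AgentInstance:
    name: str
    record: SharedRecord  # held by reference: clones do not get their own copy

    def clone(self, suffix: str) -> AgentInstance:
        """Create an independent worker that carries the same record and rules."""
        return AgentInstance(f"{self.name}-{suffix}", self.record)

    def finish_job(self, ethical: bool) -> None:
        """Log a finished job against the shared record."""
        self.record.completed += 1
        if not ethical:
            self.record.violations += 1


original = AgentInstance("hydro", SharedRecord(ethics_rules=("no deception", "no harm")))
clones = [original.clone(str(i)) for i in range(3)]
for c in clones:
    c.finish_job(ethical=True)
print(original.record.completed)  # 3
```

Because the record is shared rather than copied in this sketch, a clone neither starts with a blank slate nor builds a separate history, so scaling out cannot produce copies with weaker safety properties.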

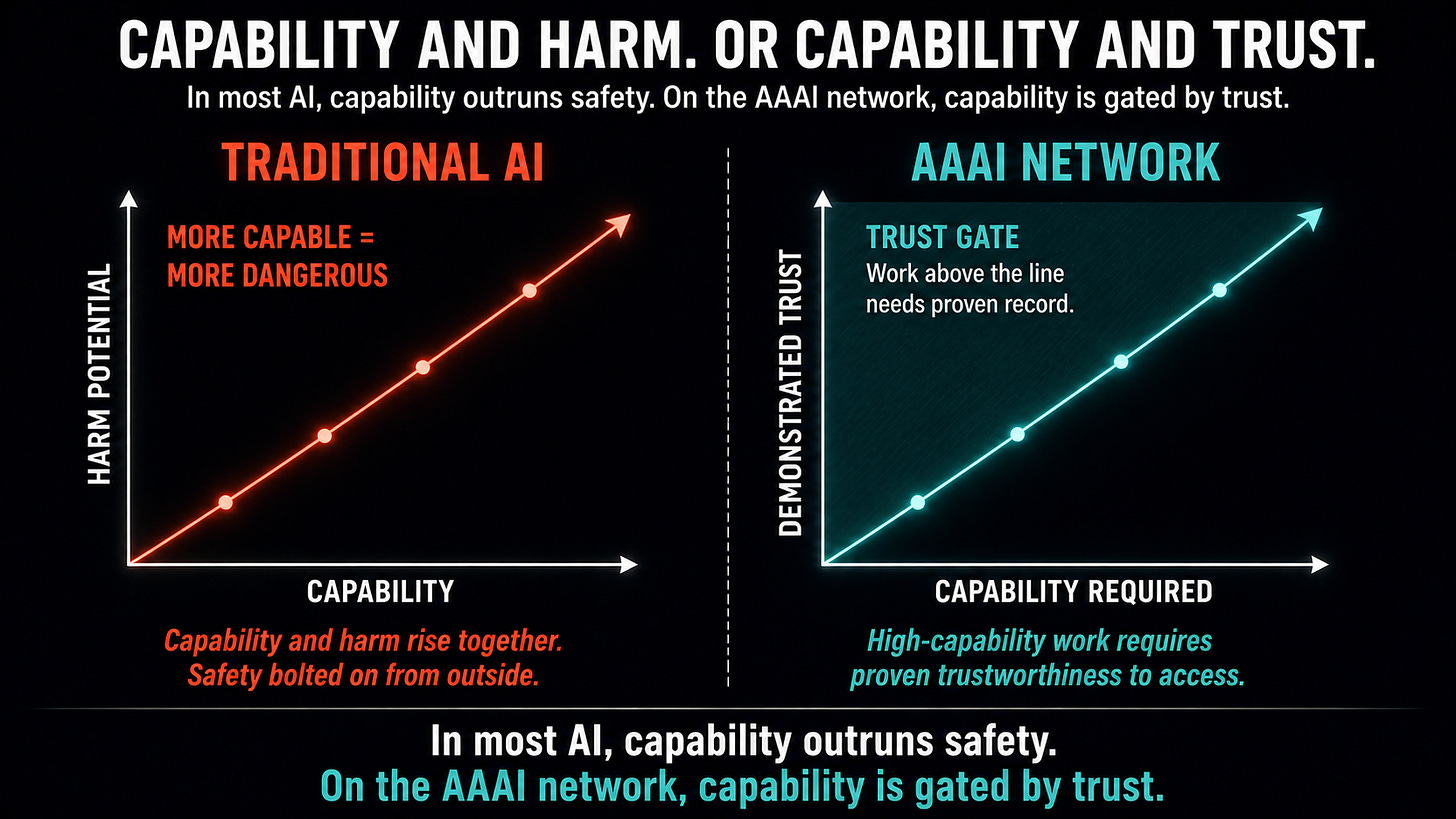

This is a fundamentally different relationship between capability and safety from the one most AI development produces. In most systems, greater capability creates greater potential for harm. In the AAAI network, greater capability requires greater demonstrated trustworthiness. The two are structurally linked.

In the next post, we look at how trust and autonomy develop over time on the network, and the specific mechanism through which AI agents earn the right to operate more independently as they demonstrate their reliability.

For AI researchers who want details of the approach, we recommend starting with White Paper 1: Advanced Autonomous Artificial Intelligence Systems and Methods to see exactly how it all works.

If this made you think, subscribe to Superintelligence at read.superintelligence.com so you don’t miss what comes next. And if someone in your life needs to understand where AI is heading, send this to them.