The Key Rule That Every Upgrade Must Follow

What happens to safety as AI systems get smarter?

History suggests that safety mechanisms designed for one level of capability do not necessarily work for systems that are much more capable. For example, financial rules written for an era when humans traded face to face in trading pits had to be rewritten with the advent of electronic trading. Similarly, as air traffic grew far beyond the levels of the early “barnstorming” era, new regulations and systems were needed to keep aviation safe.

The question for AI is whether the same pattern applies, and whether it is possible to design a system in which safety improves as fast as capability grows rather than lagging behind it.

Such a design is possible.

But for it to succeed, the design must follow a key rule: every improvement must maintain or increase human safety. A system change that reduces ethical oversight would not qualify. Nor would an upgrade that concentrates control in fewer hands, or one that allows safety constraints to be relaxed under competitive pressure.

The rule should be built into the architecture itself, not bolted on as a policy that someone might change later.
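To make “built into the architecture” concrete, here is a minimal sketch of an upgrade gate, assuming each system version can be scored on three hypothetical safety metrics that mirror the examples above (ethical oversight, distribution of control, strength of safety constraints). The names `SafetyProfile`, `Upgrade`, `UpgradeRejected`, and `apply_upgrade` are illustrative inventions, not taken from the white paper.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SafetyProfile:
    """Hypothetical safety metrics for a system version; higher is safer."""
    ethical_oversight: float     # share of decisions that pass through ethical review
    control_distribution: float  # how widely control is spread across stakeholders
    constraint_strength: float   # strictness of the active safety constraints

    def no_worse_than(self, other: "SafetyProfile") -> bool:
        """True if every metric is at least as high as in `other`."""
        return (
            self.ethical_oversight >= other.ethical_oversight
            and self.control_distribution >= other.control_distribution
            and self.constraint_strength >= other.constraint_strength
        )


@dataclass(frozen=True)
class Upgrade:
    name: str
    capability_gain: float  # deliberately ignored by the gate below
    resulting_safety: SafetyProfile


class UpgradeRejected(Exception):
    """Raised when an upgrade would lower any safety metric."""


def apply_upgrade(current: SafetyProfile, upgrade: Upgrade) -> SafetyProfile:
    """Return the new safety profile, or raise if the upgrade lowers any metric.

    Capability gain plays no part in the decision, so no amount of extra
    capability can be traded for reduced oversight, concentrated control,
    or relaxed constraints.
    """
    if not upgrade.resulting_safety.no_worse_than(current):
        raise UpgradeRejected(f"{upgrade.name!r} would reduce safety")
    return upgrade.resulting_safety


if __name__ == "__main__":
    baseline = SafetyProfile(ethical_oversight=0.9, control_distribution=0.8, constraint_strength=0.7)
    faster_but_less_overseen = Upgrade("faster-planner", 3.0, SafetyProfile(0.85, 0.8, 0.7))
    try:
        apply_upgrade(baseline, faster_but_less_overseen)
    except UpgradeRejected as err:
        print(err)  # 'faster-planner' would reduce safety
```

The check is strict per metric: a gain in one dimension cannot offset a loss in another, which matches the rule as stated for oversight, control, and constraints.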

Here is how this rule is embedded across our architecture:

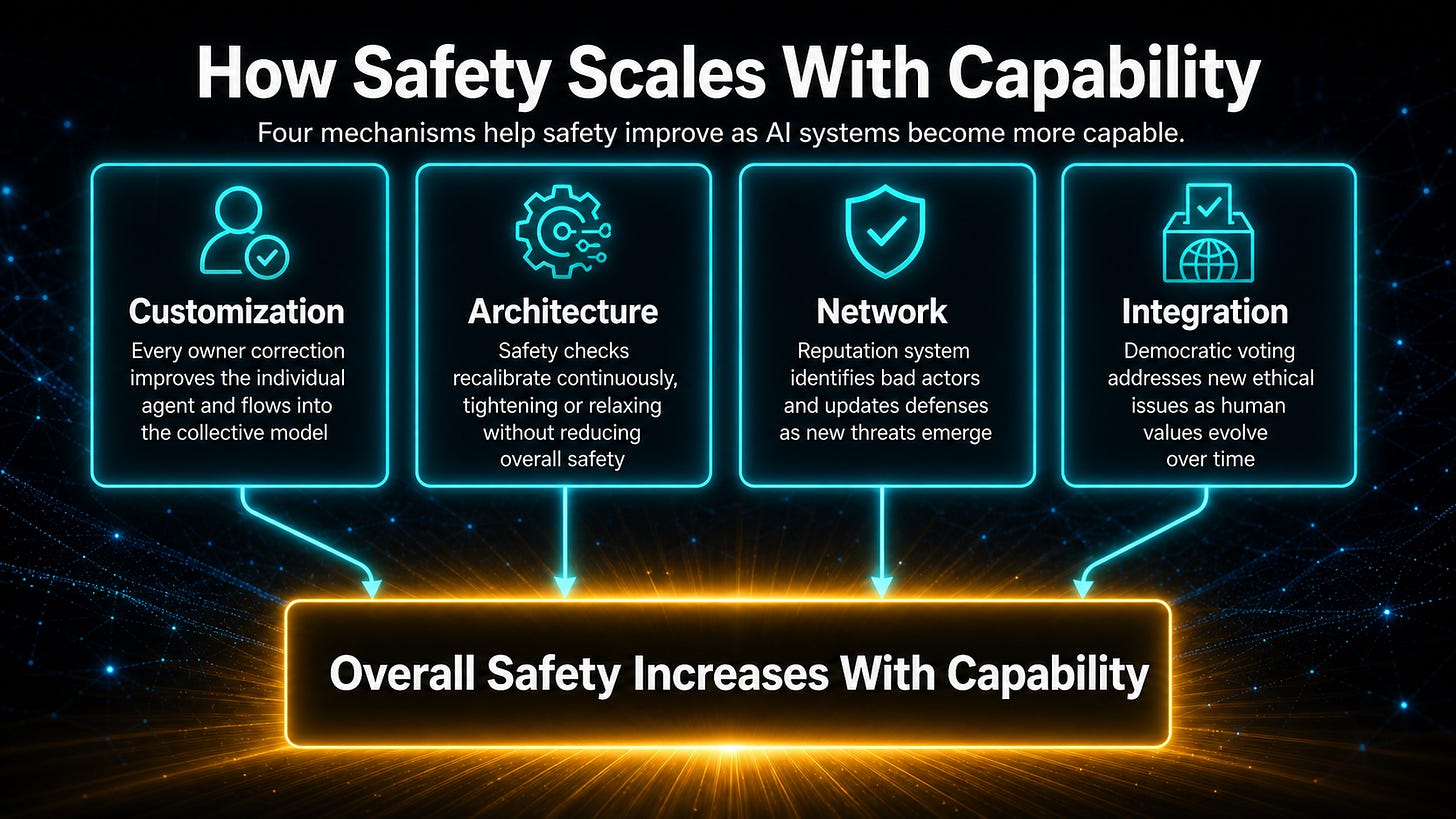

Customization. When an agent’s owner corrects an ethical error, that correction improves the individual AI agent’s judgment. Through integration, the correction also flows into the collective model, refining the ethical standards that govern AGI behavior across the network. Every correction made by any owner anywhere on the network makes the system slightly more ethically calibrated.

Architecture. The problem-solving framework can recalibrate through experience. If certain safety checks prove too restrictive in some domains, they can be relaxed, provided that doing so does not reduce overall safety. If others prove too permissive, they can be tightened. The safety mechanisms are dynamic and self-optimizing.
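The “relax only if overall safety does not decrease” condition can be sketched as follows. This assumes, purely for illustration, that each domain has a check strictness between 0 and 1 and that overall safety is a risk-weighted sum of those strictness values; `Thresholds`, `RISK_WEIGHTS`, `weighted_safety`, and `recalibrate` are hypothetical names, and the real framework may measure overall safety very differently.

```python
from typing import Callable, Dict

# Hypothetical: strictness of the safety check in each domain, from 0 (off) to 1 (strictest).
Thresholds = Dict[str, float]

# Hypothetical risk weights: how much each domain contributes to overall safety.
RISK_WEIGHTS: Dict[str, float] = {"medical": 0.6, "finance": 0.3, "trivia": 0.1}


def weighted_safety(thresholds: Thresholds) -> float:
    """One possible 'overall safety' score: risk-weighted sum of check strictness."""
    return sum(RISK_WEIGHTS[domain] * strictness for domain, strictness in thresholds.items())


def recalibrate(
    current: Thresholds,
    proposed: Thresholds,
    overall_safety: Callable[[Thresholds], float] = weighted_safety,
) -> Thresholds:
    """Adopt the proposed thresholds only if overall safety does not decrease.

    Individual domains may be relaxed or tightened; the hard requirement
    applies to the aggregate score.
    """
    if set(proposed) != set(current):
        raise ValueError("proposal must cover exactly the same domains")
    if overall_safety(proposed) >= overall_safety(current):
        return proposed
    return current


if __name__ == "__main__":
    current = {"medical": 0.8, "finance": 0.7, "trivia": 0.9}
    # Relax the low-risk domain while tightening the high-risk one: accepted.
    print(recalibrate(current, {"medical": 0.9, "finance": 0.7, "trivia": 0.5}))
    # Relax the low-risk domain alone: rejected, current thresholds kept.
    print(recalibrate(current, {"medical": 0.8, "finance": 0.7, "trivia": 0.5}))
```

With these weights, the first proposal raises the score from about 0.78 to about 0.80 and is adopted; the second lowers it to about 0.74 and is refused. A production design would likely also add per-domain floors so that a weighted sum cannot hollow out any single check.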

Network. The reputation system learns to identify problematic participants more accurately over time. When bad actors find new ways to abuse the system, the new patterns are recognized and defenses are updated. The safety mechanisms become more accurate at detecting new types of threats as they emerge.

Integration. Voting and democratic participation enable the contributor community to address new ethical issues as they arise. The ethical foundation evolves as human understanding and human ethics change over time.

Another important principle is to avoid unrecoverable outcomes.

Decisions that cannot be reversed if they prove mistaken should be treated as categorically different from decisions that can be corrected. Our architecture prioritizes avoiding catastrophic scenarios over maximizing short-term capability.
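One way to treat irreversible decisions as a separate category is to route them down a different path before any cost-benefit reasoning happens. The sketch below assumes hypothetical per-action estimates of benefit and catastrophe risk, a hypothetical tolerance constant, and a hypothetical “require human approval” route for irreversible actions; none of these names or values come from the white paper.

```python
from dataclasses import dataclass
from enum import Enum, auto


class Route(Enum):
    EXECUTE = auto()
    REQUIRE_HUMAN_APPROVAL = auto()  # hypothetical escalation path
    REJECT = auto()


@dataclass(frozen=True)
class Action:
    name: str
    reversible: bool
    expected_benefit: float
    catastrophe_risk: float  # hypothetical estimated probability of a catastrophic outcome


CATASTROPHE_TOLERANCE = 1e-6  # hypothetical threshold


def route(action: Action) -> Route:
    # Avoiding catastrophe takes priority over any benefit, for every action.
    if action.catastrophe_risk > CATASTROPHE_TOLERANCE:
        return Route.REJECT
    # Irreversible decisions are handled categorically differently:
    # expected benefit alone can never authorize them.
    if not action.reversible:
        return Route.REQUIRE_HUMAN_APPROVAL
    # Reversible decisions can be corrected later, so ordinary cost-benefit applies.
    return Route.EXECUTE if action.expected_benefit > 0 else Route.REJECT


if __name__ == "__main__":
    for a in (
        Action("tune-cache-size", reversible=True, expected_benefit=1.0, catastrophe_risk=0.0),
        Action("delete-all-backups", reversible=False, expected_benefit=50.0, catastrophe_risk=0.0),
        Action("big-but-risky-win", reversible=True, expected_benefit=1000.0, catastrophe_risk=0.01),
    ):
        print(a.name, route(a).name)
```

The ordering of the checks is the design choice: catastrophe risk is evaluated first and cannot be outweighed, and reversibility is evaluated before benefit, so a large expected payoff never buys its way past either check.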

Competitive pressure often tempts companies to move faster, deploy sooner, and address safety concerns later.

Our improvement architecture removes that temptation structurally. Capability and safety improve through the same mechanisms, so you cannot accelerate one without accelerating the other. For example, as more agents are added, the system’s values become MORE representative of the human population. As more problems are solved, the system learns more about what it means to solve problems in ways that humans judge to be ethical. And as the system’s speed and capability increase, ethical checks run more often and more quickly, because they are built into the thinking cycle used to solve each problem.

Whether or not our architecture is adopted, advanced AI must be developed with safety built into its foundations.

As the system’s capability increases, the safety checks MUST increase automatically and proportionally to maintain or increase the overall safety level. This should be treated as a hard design constraint by every developer working on advanced AI systems.
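A simple way to make checks scale automatically is to place the ethical check inside the reasoning loop itself, so every step that runs is also checked. The sketch below assumes a hypothetical model in which solving a problem is a sequence of step functions and the ethical check is a predicate on each intermediate result; `solve`, `EthicsViolation`, and the toy check are illustrative, not the architecture’s actual interfaces.

```python
from typing import Callable, List, Tuple

Step = Callable[[str], str]   # hypothetical: one reasoning step transforms the working state
Check = Callable[[str], bool]  # hypothetical: returns True if a candidate state is acceptable


class EthicsViolation(Exception):
    """Raised when a reasoning step produces a state the ethical check rejects."""


def solve(problem: str, steps: List[Step], ethical_check: Check) -> Tuple[str, int]:
    """Run a thinking cycle in which every step is ethically checked.

    Because the check lives inside the loop, the number of checks always
    equals the number of steps executed: a system that runs more (or faster)
    steps automatically runs proportionally more checks.
    """
    state = problem
    checks_run = 0
    for step in steps:
        candidate = step(state)
        checks_run += 1
        if not ethical_check(candidate):
            raise EthicsViolation(f"step rejected: {candidate!r}")
        state = candidate  # only checked states are carried forward
    return state, checks_run


if __name__ == "__main__":
    steps = [lambda s: s + " -> plan", lambda s: s + " -> act"]
    no_harm = lambda s: "harm" not in s  # toy stand-in for a real ethical evaluation
    print(solve("task", steps, no_harm))  # ('task -> plan -> act', 2)
```

Coupling the check to the step, rather than running it on a separate schedule, is what makes the ratio of checks to work fixed by construction instead of by policy.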

In the next post, we step back from the subsystems and ask what the world looks like when this architecture is operating at scale.

For AI researchers who want details of the approach, we recommend starting with White Paper 1: Advanced Autonomous Artificial Intelligence Systems and Methods to see how it all works.

If this made you think, subscribe to Superintelligence at read.superintelligence.com so you don’t miss what comes next. And if someone in your life needs to understand where AI is heading, send this to them.