The Fastest Path Is the Safest Path

The trade-off that doesn't have to exist

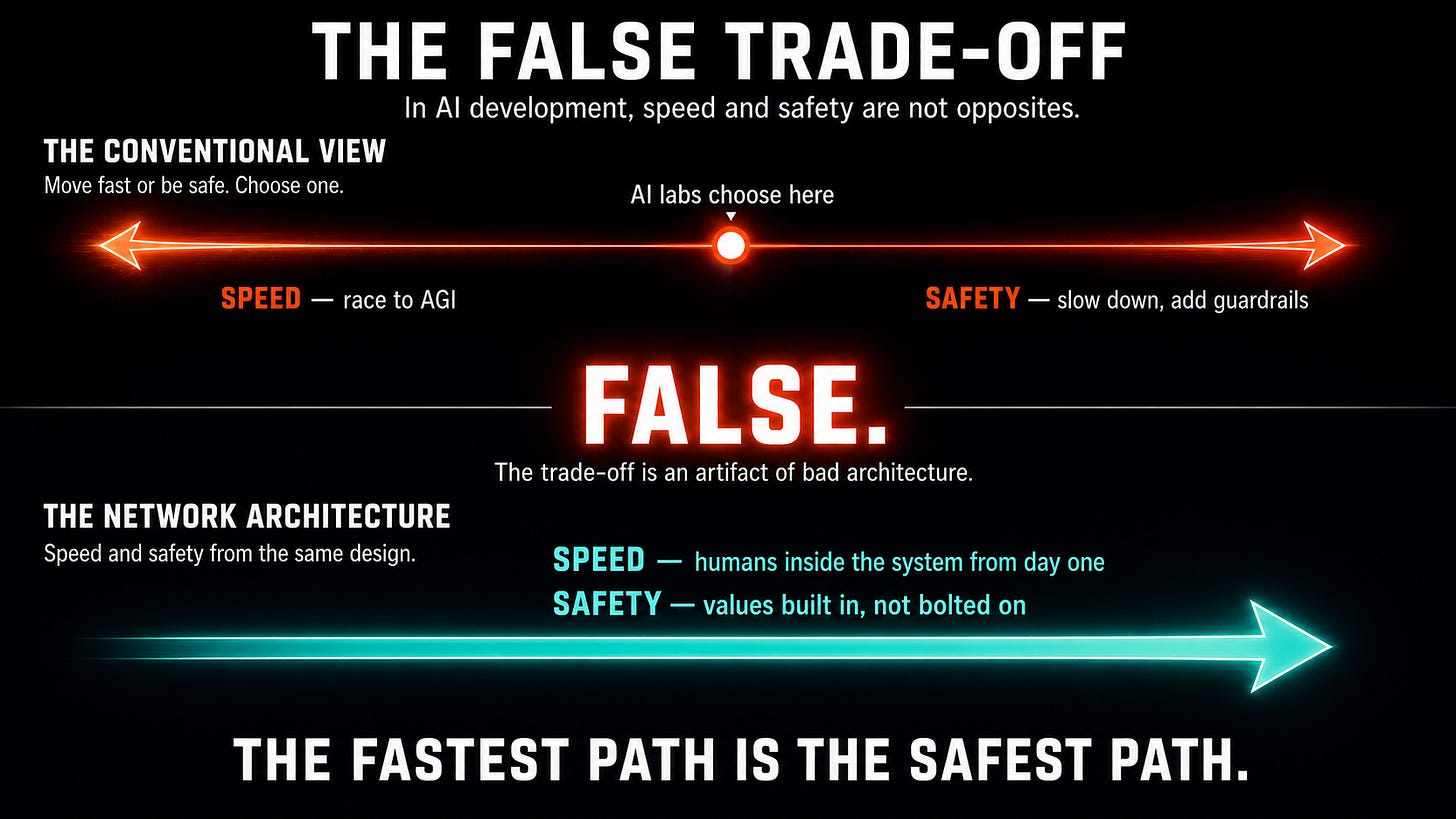

Every conversation about AI development eventually lands on the same assumed trade-off: you can move fast, or you can be safe. The labs racing to build AGI implicitly accept this trade-off. The safety researchers trying to slow the AI arms race accept it, too. Almost nobody questions whether the trade-off is real.

It isn’t. Once you realize that the safest solution can also be the fastest, the entire framing of the AI race changes. We don’t have to choose between getting there first and getting there responsibly. The responsible path can also get us there first.

Here’s why.

Current AI development is both slow and unsafe because it excludes most human intelligence and values from the system. AI training scrapes publicly available data but misses the knowledge locked in human minds. Safety mechanisms are tacked on after the fact, rather than human values being built in from the foundation.

These two shortcomings share a single cause. The first is a data problem: AI systems trained only on what humans have already written down cannot access the tacit knowledge, judgment, and expertise that exist only in human minds. The second is a safety problem: when human values are not built into the system from the beginning, they have to be engineered in afterward, and that never works as well. Both stem from the same architectural decision: keeping humans outside the system rather than at its center.

Now flip that decision. Put millions of humans inside the system from the beginning. Ask them to customize AI agents with their own knowledge, expertise, and values. Let those agents collaborate on a shared network and earn compensation for solving real problems. Aggregate their contributions into a collective intelligence that no individual AI system could achieve alone.

Using this approach, progress toward AGI will accelerate dramatically. You’re no longer trying to create general intelligence in a single AI that is separate from humans. You’re directly accessing the expertise that already exists in human minds, exactly when it is needed. The most valuable training data in the world, the stuff that never got written down, suddenly flows into the system through millions of human-AI interactions. The AI agents in the system learn from humans so that next time they can solve similar problems on their own. The overall system is capable of AGI from day one because humans are there to fill the gaps in what AI agents understand. But over time, as AI agents learn both knowledge and ethics from humans, they can do more of the AGI’s thinking independently.

Safety improves for the same structural reason. Every AI agent learns and carries the values of its human owner. When millions of agents interact on the network, their collective ethical judgments form a shared set of values that reflects genuine human diversity rather than the preferences of any small group. This is not a safety layer tacked on after the fact. It is an inherent property of a system built around human participation: because the system cannot run without humans, their values are always part of it.

Some argue the AI race could be slowed or stopped through regulation or international agreement. That is debatable, but the scale of investment by countries and companies makes a slowdown unlikely. In 2017, Vladimir Putin stated that whoever leads in AI will rule the world, and the money flowing in from both governments and private companies reflects that same belief. The race can, however, be redirected toward an approach in which winning and safety are features of the same underlying system design.

The design we propose enables many humans and AI agents to collaborate on a network, resulting in a safer, more transparent, more powerful, and more democratic form of AGI.

In the next post, we get into the specifics of the design. We’ll start with the Customization subsystem, which shows how an ordinary person transforms a generic LLM into a personalized agent that knows what they know, communicates how they communicate, and holds the values they hold.

The architecture behind this goes much deeper. Read White Paper 1: Advanced Autonomous Artificial Intelligence Systems and Methods to see exactly how it all works: superintelligence.com/whitepaper1-aaai-systems-methods.

If this made you think, subscribe to Superintelligence at read.superintelligence.com so you don’t miss what comes next. And if someone in your life needs to understand where AI is actually heading, send this to them.