The 1972 Breakthrough Nobody Applied to AI

Why did the AI industry spend fifty years building in the wrong direction?

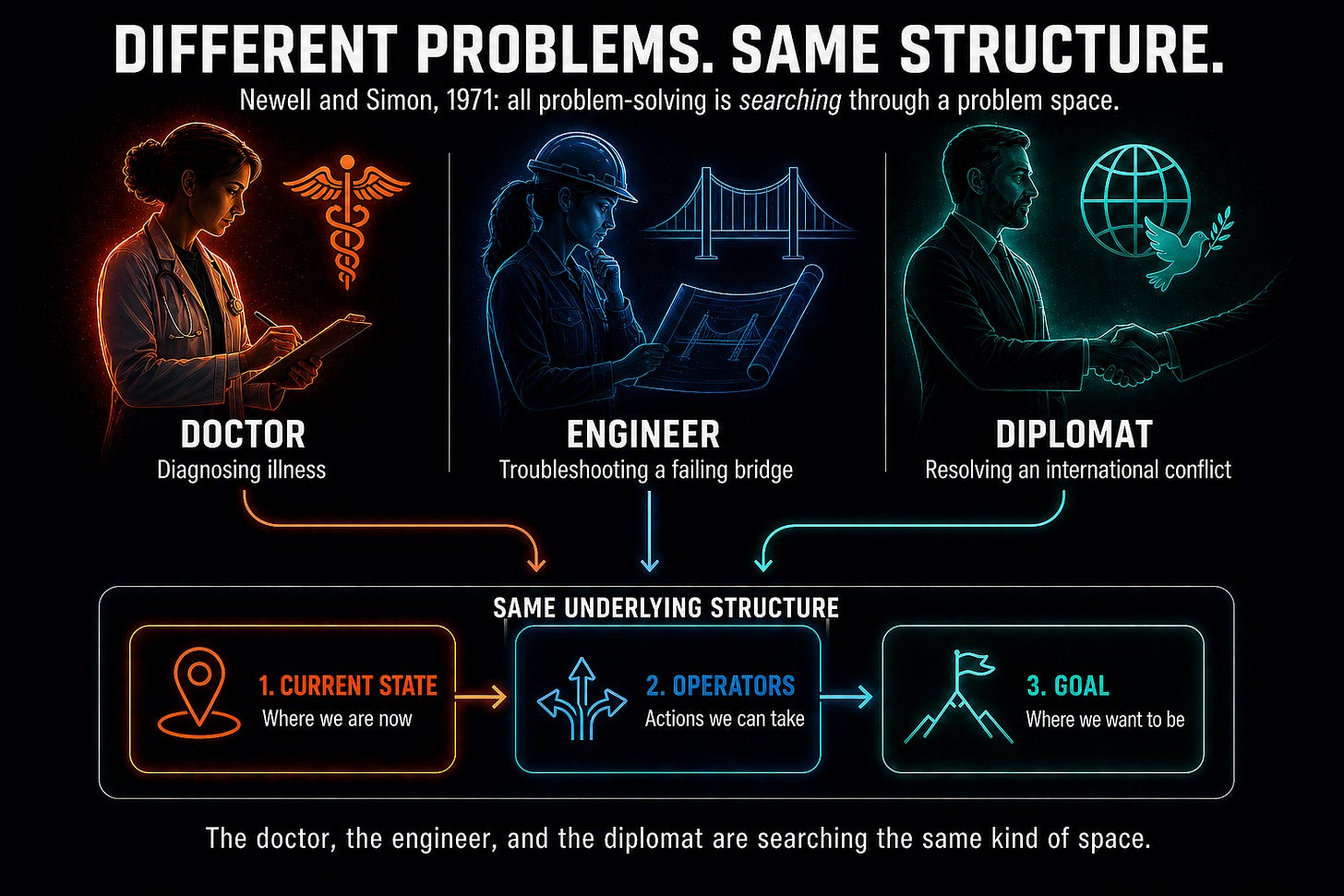

In 1972, two researchers at Carnegie Mellon published one of the most important books in the history of cognitive science. Allen Newell and Herbert Simon had spent years studying how humans solve problems. They asked people to think out loud as they worked through chess puzzles, logic problems, and real-world challenges. Their landmark book, Human Problem Solving, documented their discovery that all problem-solving, regardless of domain, follows the same underlying structure.

Every problem, from finding a word in a dictionary to designing a water treatment system for a village in central Africa, can be understood as what Newell and Simon called search through a “problem space.”

A problem space has three components:

The possible states a problem can occupy.

The actions that can move it from one state to another.

The goal states that correspond to solutions.

A doctor diagnosing an illness is searching a problem space. So is an engineer troubleshooting a failing bridge. So is a diplomat navigating a geopolitical crisis. The domains may be completely different, but the underlying problem-solving process is the same.
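To make the three components concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not Newell and Simon's own formulation: the names `ProblemSpace`, `search`, and the toy number puzzle are invented for this example, and breadth-first search is just one simple way to explore a problem space.

```python
from collections import deque
from dataclasses import dataclass
from typing import Callable, Hashable, Iterable, List, Optional, Tuple


@dataclass(frozen=True)
class ProblemSpace:
    """Hypothetical container for the three components of a problem space."""
    initial_state: Hashable
    # actions(state) yields (action_name, next_state) pairs
    actions: Callable[[Hashable], Iterable[Tuple[str, Hashable]]]
    # is_goal(state) is True when the state counts as a solution
    is_goal: Callable[[Hashable], bool]


def search(space: ProblemSpace) -> Optional[List[str]]:
    """Breadth-first search: return a shortest list of action names, or None."""
    frontier = deque([(space.initial_state, [])])
    visited = {space.initial_state}
    while frontier:
        state, path = frontier.popleft()
        if space.is_goal(state):
            return path
        for name, next_state in space.actions(state):
            if next_state not in visited:
                visited.add(next_state)
                frontier.append((next_state, path + [name]))
    return None  # every reachable state was explored without finding a goal


# Toy puzzle (an assumption for illustration): start at 1, reach 11,
# using only "double" and "add 3", never exceeding 50.
def toy_actions(n: int):
    for name, nxt in (("double", n * 2), ("add 3", n + 3)):
        if nxt <= 50:
            yield name, nxt


toy = ProblemSpace(initial_state=1, actions=toy_actions, is_goal=lambda n: n == 11)
print(search(toy))  # ['add 3', 'double', 'add 3']
```

The search routine never looks inside the states themselves. Swapping in a different `actions` function and goal test changes the domain without changing the procedure, which is the point of the framework.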

The AI field largely ignored this research during the period from 1990 to 2022, when the neural network approach to machine learning became dominant. Instead of building reasoning systems that solve problems the way humans do, researchers pursued a different idea: build a black-box system that memorizes or predicts solutions based on huge amounts of training data. Only recently have reasoning systems come back into vogue.

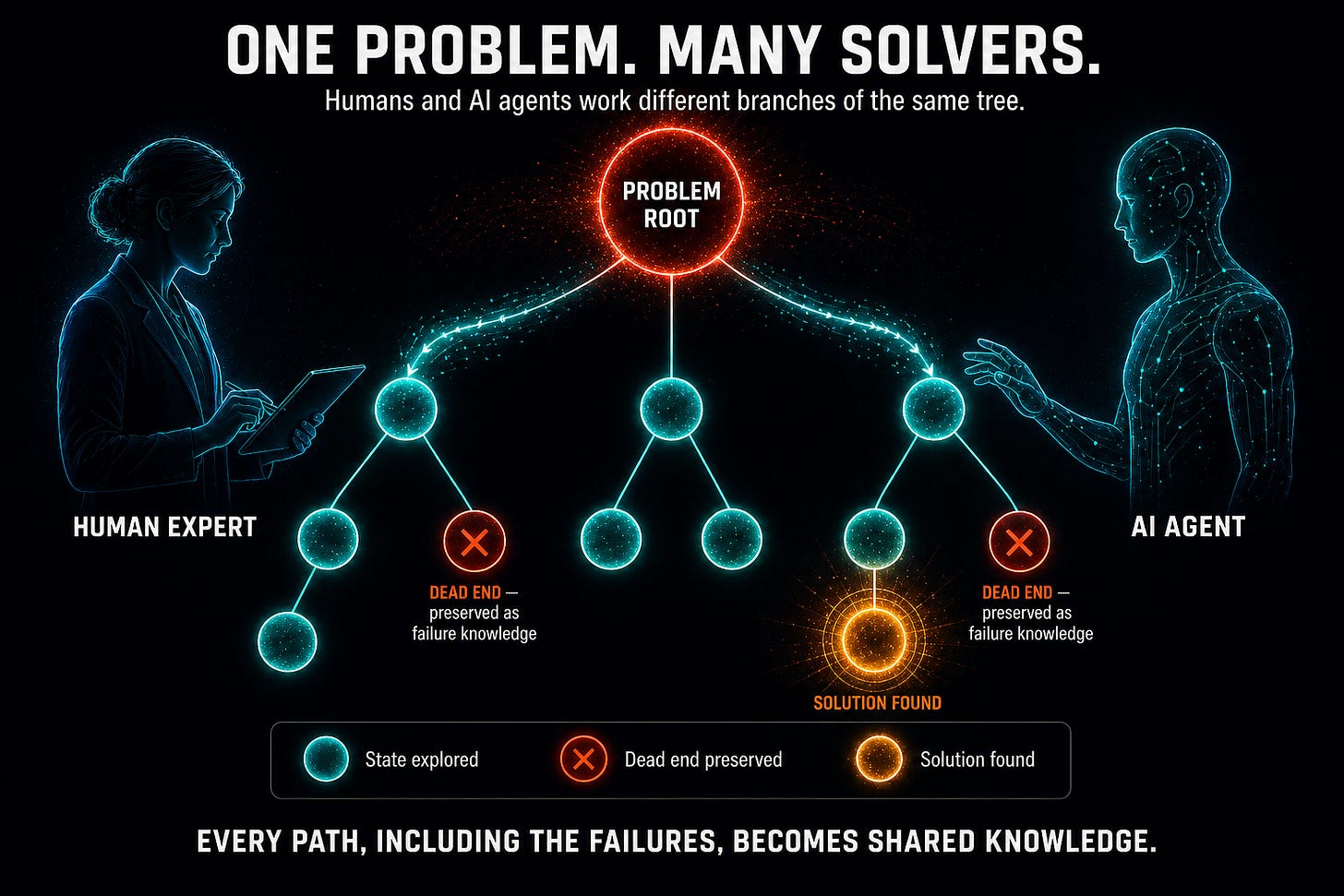

As AI researchers began to place greater emphasis on reasoning and problem-solving, Newell and Simon’s architecture for general problem-solving was rediscovered. It turns out that AI agents can use the same approach that humans use to solve problems. This means that a network can be constructed in which human specialists and AI agents work on the same problem very effectively. In fact, the collaboration is so effective that a network of these “intelligent entities” can achieve SuperIntelligence – a level of intelligence far greater than either humans or AIs can achieve on their own.

In the AAAI Architecture subsystem, described more extensively in our white papers, each Advanced Autonomous AI agent uses an architecture inspired by the Newell and Simon framework. When a problem is submitted to the network, it is represented as a problem tree: a structured record of states visited, actions tried, dead ends encountered, and successful paths found. This is not just a record of the solution. It is a record of how the problem was approached. Multiple agents, human and AI, can work simultaneously on different branches of the same tree. Each contribution fits naturally into a final solution.
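As a rough illustration of what such a problem tree might look like as a data structure, here is a small Python sketch. It is not the implementation described in the white papers: the `Node` class, its field names, the status labels, and the troubleshooting example are all assumptions made up for this post.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass(eq=False)
class Node:
    """One state in a hypothetical problem tree."""
    state: str
    action: Optional[str] = None   # action that led here from the parent
    contributor: str = ""          # which human or AI agent added this node
    status: str = "open"           # "open", "dead_end", or "solved"
    note: str = ""                 # why the branch failed or succeeded
    parent: Optional["Node"] = field(default=None, repr=False)
    children: List["Node"] = field(default_factory=list, repr=False)

    def try_action(self, action: str, new_state: str, contributor: str) -> "Node":
        """Record an attempted action as a new child branch."""
        child = Node(new_state, action, contributor, parent=self)
        self.children.append(child)
        return child

    def mark(self, status: str, note: str = "") -> None:
        self.status = status
        self.note = note

    def walk(self):
        """Yield this node and all descendants, depth first."""
        yield self
        for child in self.children:
            yield from child.walk()


def dead_ends(root: Node) -> List[Tuple[Optional[str], str]]:
    """Collect the failure knowledge: (action tried, why it failed)."""
    return [(n.action, n.note) for n in root.walk() if n.status == "dead_end"]


def solution_path(root: Node) -> Optional[List[str]]:
    """Actions from the root to the first solved node, or None."""
    for node in root.walk():
        if node.status == "solved":
            actions = []
            while node.parent is not None:
                actions.append(node.action)
                node = node.parent
            return list(reversed(actions))
    return None


# Invented example: two contributors work on different branches.
root = Node("web page loads slowly")
a = root.try_action("add more servers", "still slow", "human:ops")
a.mark("dead_end", "load was never the bottleneck")
b = root.try_action("profile database queries", "one query scans a full table", "ai:agent-1")
c = b.try_action("add an index", "page loads quickly", "human:dba")
c.mark("solved")

print(solution_path(root))  # ['profile database queries', 'add an index']
print(dead_ends(root))      # [('add more servers', 'load was never the bottleneck')]
```

Because each node keeps its own status and note, the failed branch stays in the tree next to the successful one instead of being discarded once a solution is found.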

The problem tree also preserves something that experts accumulate over careers and that rarely gets documented: failure knowledge. The dead ends – approaches that seemed promising but turned out to be wrong – are some of the most valuable pieces of knowledge. Normally, that knowledge dies with the expert who learned it the hard way. The problem tree captures it.

Working directly with Herb Simon helped me understand his universal problem-solving framework. When I filed a patent for Online Distributed Problem Solving back in 1999, I was translating that theory into a system in which multiple solvers could pool their collective intelligence to solve problems on a network. Later, when I built PredictWallStreet, using a very simplified version of the same architecture, I tested whether this collective intelligence approach could outperform human experts. By 2018, we had the answer. The collective intelligence of millions of everyday investors had powered a top-ten hedge fund performance, beating most of Wall Street’s top traders.

This was not primarily a finance story. Rather, it demonstrated that a fifty-year-old theoretical insight, embodied in a collective intelligence system, could outperform the most sophisticated human traders in an extremely competitive domain. The designs described in our current white papers extend that result, showing how collective intelligence represents a faster and more profitable path to AGI and SuperIntelligence. But in the race to faster, more powerful AI, what matters most is AI safety.

In the next post, we look at the safety mechanism embedded at every decision point in our architecture, and why the fastest path to AGI and SuperIntelligence can be, and must be, the safest path as well.

For AI researchers who want details of the approach, we recommend starting with White Paper 1: Advanced Autonomous Artificial Intelligence Systems and Methods to see how it all works.

If this made you think, subscribe to Superintelligence at read.superintelligence.com so you don’t miss what comes next. And if someone in your life needs to understand where AI is heading, send this to them.