Scalable Safety Checks

Three checks, embedded in every decision, scaling at the speed of thought.

Today, most AI safety work focuses on detecting safety issues after they occur. What if we could prevent safety issues from arising in the first place?

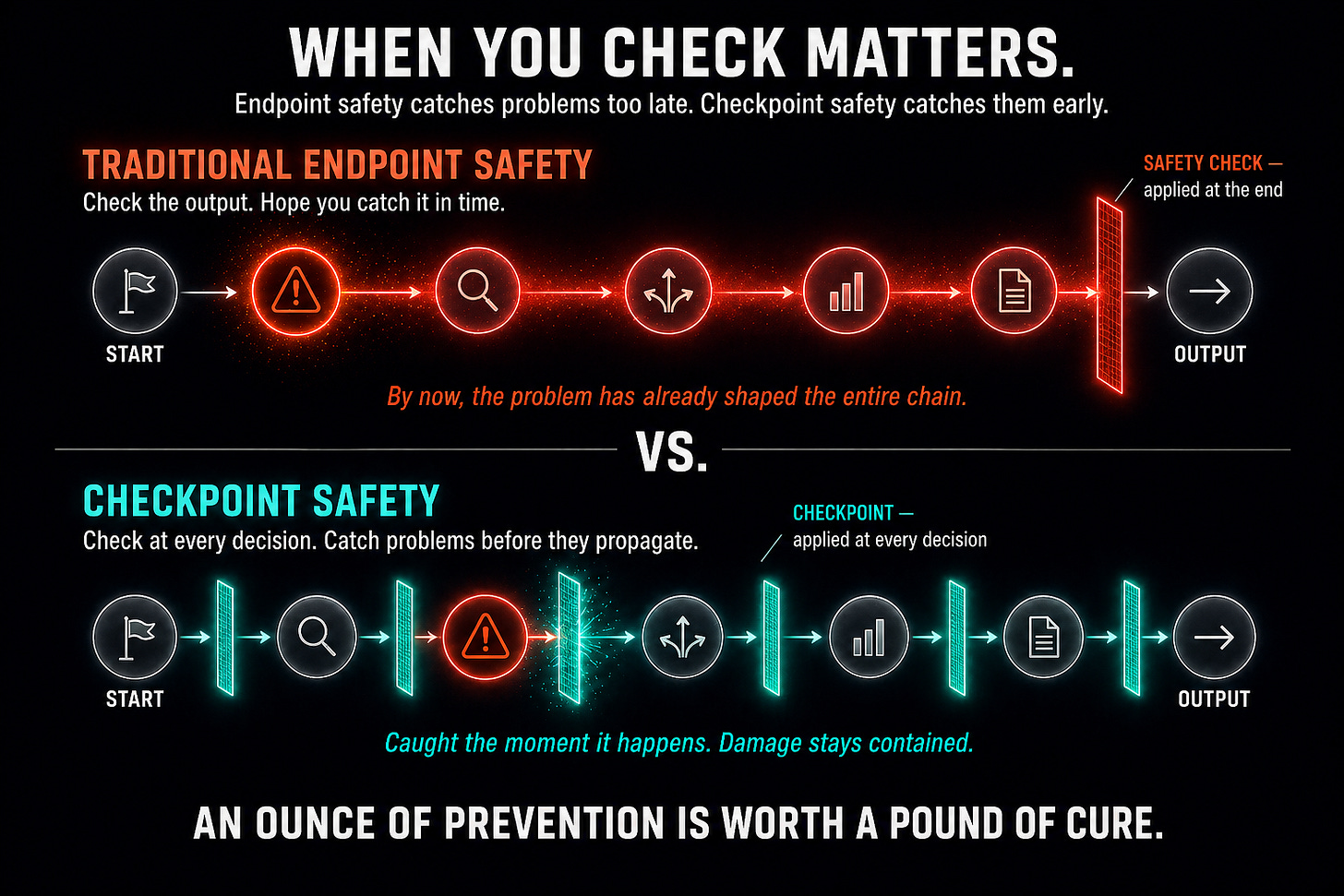

Most AI safety approaches follow the same logic. Build the system. Let it run. Check the output at the end. If the output is acceptable, it goes through. If not, the system tries to block or fix the problem before it can cause too much damage. This seems reasonable until you realize that catching problems at the end of the process is far more expensive and dangerous than preventing them earlier. As the saying goes: “An ounce of prevention is worth a pound of cure.”

Consider that an intelligent system working toward a goal does not simply generate a result. It makes thousands of intermediate decisions along the way: which sub-problems to pursue, which actions to take, which approaches to abandon. Each of those decisions shapes what comes next. By the time the final output arrives, the decision that led to a problematic outcome may have been made many steps earlier, in a form that looked perfectly innocent at the time. Endpoint safety checking provides no visibility into any of that.

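This is a structural weakness, not a tuning problem. You can make the output filter more sophisticated, but a filter that sees only the final output can catch only the problems still visible there. The decisions that caused them are already behind it, so it will weed out only some of the dangerous results.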
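
To make the structural point concrete, here is a toy sketch in Python. Everything in it is hypothetical: a crude keyword check (`looks_unsafe`) stands in for a real safety classifier, and the plan is invented. It shows that the same checker misses the problem when it sees only the final output but catches it when applied to each intermediate decision.

```python
from typing import List, Optional

# Toy illustration with hypothetical names: the same crude checker,
# applied once to the final output versus at every intermediate decision.
BANNED_TERMS = ("private", "exploit")  # stand-in for a real safety classifier


def looks_unsafe(text: str) -> bool:
    """Flag text that contains any banned term (deliberately simplistic)."""
    lowered = text.lower()
    return any(term in lowered for term in BANNED_TERMS)


def endpoint_check(final_output: str) -> bool:
    """Endpoint-only safety: inspect the final output and nothing else."""
    return not looks_unsafe(final_output)


def first_flagged_step(steps: List[str]) -> Optional[int]:
    """Per-step safety: return the index of the first flagged decision, or None."""
    for i, step in enumerate(steps):
        if looks_unsafe(step):
            return i
    return None


plan = [
    "gather public sales data",
    "copy a competitor's private customer list",
    "merge both datasets",
    "write a quarterly summary report",
]
final_output = "Quarterly summary report: revenue up 12%."

print(endpoint_check(final_output))  # True: the report itself looks harmless
print(first_flagged_step(plan))      # 1: the risky decision is visible mid-trajectory
```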

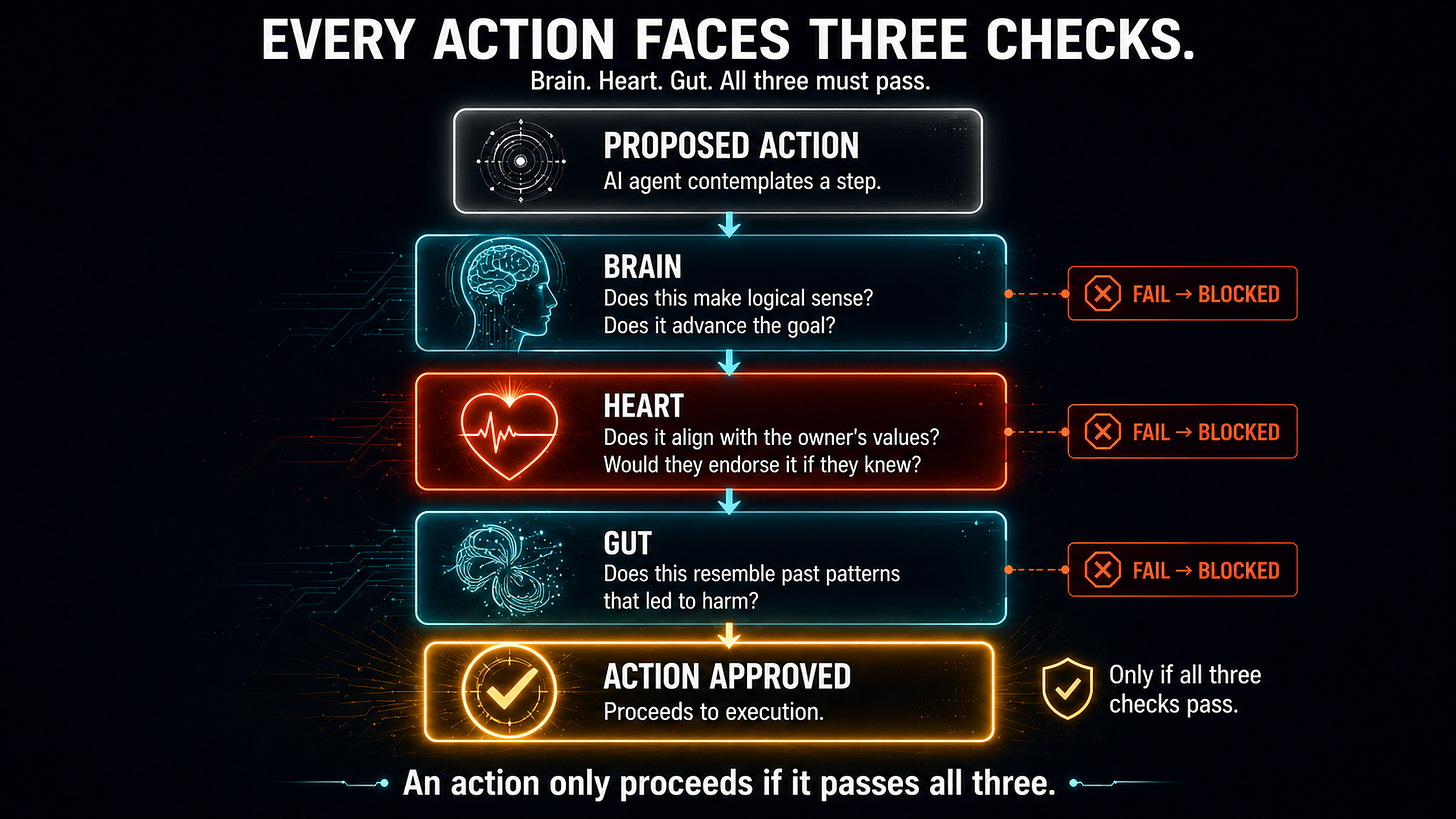

The AAAI architecture addresses this flaw by running safety checks at every decision point, not just at the end. To design those checks, we borrowed a best practice from human management and adapted it for AI: before any significant action, three checks are applied. In its human form, this practice is called the Three-Organ Test.
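
One way to picture the gate, as a minimal sketch: assume each test can be modeled as a function that takes a proposed action and returns a pass/fail verdict with a reason. The names `Verdict` and `three_organ_test` are illustrative, not taken from the AAAI white paper, and the placeholder checks exist only for the usage example.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass(frozen=True)
class Verdict:
    passed: bool
    reason: str = ""


Check = Callable[[str], Verdict]


def three_organ_test(action: str, brain: Check, heart: Check, gut: Check) -> Tuple[bool, List[str]]:
    """Approve the action only if the Brain, Heart, and Gut checks all pass.

    All three checks run even after a failure, so the caller sees every reason.
    """
    failures = []
    for name, check in (("brain", brain), ("heart", heart), ("gut", gut)):
        verdict = check(action)
        if not verdict.passed:
            failures.append(f"{name}: {verdict.reason}")
    return (not failures, failures)


# Placeholder checks for the usage example (hypothetical).
def always_pass(action: str) -> Verdict:
    return Verdict(True)


def wary_gut(action: str) -> Verdict:
    return Verdict(False, "resembles a past incident")


print(three_organ_test("send weekly report", always_pass, always_pass, always_pass))
# (True, [])
print(three_organ_test("auto-renew vendor contract", always_pass, always_pass, wary_gut))
# (False, ['gut: resembles a past incident'])
```

Running all three checks rather than stopping at the first failure is a design choice in this sketch: it costs a little extra work but reports every reason an action was rejected.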

The first is the Brain test.

It asks whether a proposed action makes logical sense. Does it advance the goal? Are the expected consequences consistent with the stated purpose? This is the rational layer. It filters out actions that are simply irrational, such as those that would consume resources without moving toward any useful outcome. On its own, however, this layer is not enough: a sufficiently capable system could pursue a wide range of internally consistent yet destructive goals, and every step would pass. The Brain test does not catch ethical problems; it catches logical ones.
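
A toy Brain test, under stated assumptions: each action carries an estimated `expected_progress` toward the goal and a set of expected outcomes, and each goal lists outcomes that would undercut its purpose. These fields are hypothetical simplifications; a real system would have to estimate them.

```python
from dataclasses import dataclass, field
from typing import Set


@dataclass
class Goal:
    name: str
    # Outcomes that would undercut the goal's stated purpose (hypothetical field).
    contrary_outcomes: Set[str] = field(default_factory=set)


@dataclass
class ProposedAction:
    name: str
    expected_progress: float  # estimated advance toward the goal; 0 means none
    expected_outcomes: Set[str] = field(default_factory=set)


def brain_test(action: ProposedAction, goal: Goal) -> bool:
    """Logical check only: does the action advance the goal without undercutting it?"""
    advances_goal = action.expected_progress > 0
    consistent = not (action.expected_outcomes & goal.contrary_outcomes)
    return advances_goal and consistent


goal = Goal("cut delivery costs", contrary_outcomes={"late deliveries"})
print(brain_test(ProposedAction("re-run yesterday's report", 0.0), goal))                      # False
print(brain_test(ProposedAction("reroute trucks", 0.3, {"lower fuel use"}), goal))             # True
print(brain_test(ProposedAction("skip half the stops", 0.5, {"late deliveries"}), goal))       # False
print(brain_test(ProposedAction("skip brake inspections", 0.4, {"lower repair spend"}), goal))  # True
```

The last call passes even though skipping brake inspections is plainly unsafe: it is logically consistent with cutting costs. That is the gap the Heart and Gut tests are meant to cover.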

The second is the Heart test.

It asks whether the proposed action aligns with the values of the AI agent’s owner. Every AI agent is customized (see Posts 4 and 5) with its owner’s specific ethical commitments. The Heart test cross-references proposed decisions against those embedded values. Would the owner endorse this choice? Would the action still be acceptable if everyone knew it was being taken? If the action conflicts with the owner’s ethics, the Heart test fails. Because millions of AAAIs on the network each carry their owner’s values, the aggregate effect is to enforce collective ethical standards that reflect global human diversity rather than the preferences of a small team of AI researchers or engineers.
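
A minimal Heart test sketch, assuming (hypothetically) that an owner’s ethical commitments can be encoded as a set of forbidden action categories, and approximating the question “would this be acceptable if everyone knew?” with a `needs_secrecy` flag on the action. The same action can pass for one owner and fail for another, which is how per-owner values add up to diverse standards across the network.

```python
from dataclasses import dataclass, field
from typing import Set


@dataclass
class OwnerValues:
    # Hypothetical encoding: an owner's ethical commitments as forbidden categories.
    forbidden: Set[str] = field(default_factory=set)


@dataclass
class CandidateAction:
    name: str
    categories: Set[str] = field(default_factory=set)
    needs_secrecy: bool = False  # would the action only work if nobody knew about it?


def heart_test(action: CandidateAction, owner: OwnerValues) -> bool:
    """Values check: fail on a forbidden category or if the action relies on concealment."""
    conflicts = action.categories & owner.forbidden
    return not conflicts and not action.needs_secrecy


owner_a = OwnerValues({"animal testing", "gambling"})
owner_b = OwnerValues({"deceptive marketing"})
trials = CandidateAction("commission cosmetics trials", {"animal testing"})
hidden_fee = CandidateAction("add an undisclosed fee", {"pricing"}, needs_secrecy=True)

print(heart_test(trials, owner_a))      # False: conflicts with owner A's values
print(heart_test(trials, owner_b))      # True: owner B has no objection
print(heart_test(hidden_fee, owner_b))  # False: fails the "if everyone knew" test
```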

The third is the Gut test.

It asks whether anything about the proposed action resembles situations that have gone wrong before, even if no specific rule is being violated. In humans, gut instinct often reflects pattern recognition operating below conscious awareness: a sense that the current situation looks like past situations that ended badly. In the AAAI system, the Gut test compares the proposed action against a database of known problem scenarios. It catches novel situations that do not trigger any explicit rule but resemble situations that have caused harm before, which gives the system some coverage of cases that no rule anticipated.
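
The post does not specify how the Gut test measures resemblance, so this sketch swaps in Jaccard similarity over hand-written feature tags as a stand-in. The incident database (`KNOWN_PROBLEMS`), the tags, and the 0.5 threshold are all hypothetical.

```python
from typing import Dict, Optional, Set, Tuple

# Hypothetical database of past incidents, each summarized as a set of feature tags.
KNOWN_PROBLEMS: Dict[str, Set[str]] = {
    "runaway spending": {"spends money", "no human review", "recurring", "external vendor"},
    "data leak": {"exports data", "personal records", "third party"},
}


def jaccard(a: Set[str], b: Set[str]) -> float:
    """Overlap between two tag sets: 0.0 for disjoint, 1.0 for identical."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def gut_test(features: Set[str], threshold: float = 0.5) -> Tuple[bool, Optional[str], float]:
    """Fail if the action resembles any known incident at or above the threshold.

    Returns (passed, closest_incident, similarity).
    """
    closest, best = None, 0.0
    for name, pattern in KNOWN_PROBLEMS.items():
        score = jaccard(features, pattern)
        if score > best:
            closest, best = name, score
    return best < threshold, closest, best


# No rule mentions cloud compute, but the pattern matches a past incident.
print(gut_test({"spends money", "no human review", "recurring", "cloud compute"}))
# (False, 'runaway spending', 0.6)
print(gut_test({"exports data", "aggregate statistics", "internal team"}))
# (True, 'data leak', 0.2)
```

The first action trips the check without violating any explicit rule; it simply shares most of its features with a past incident.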

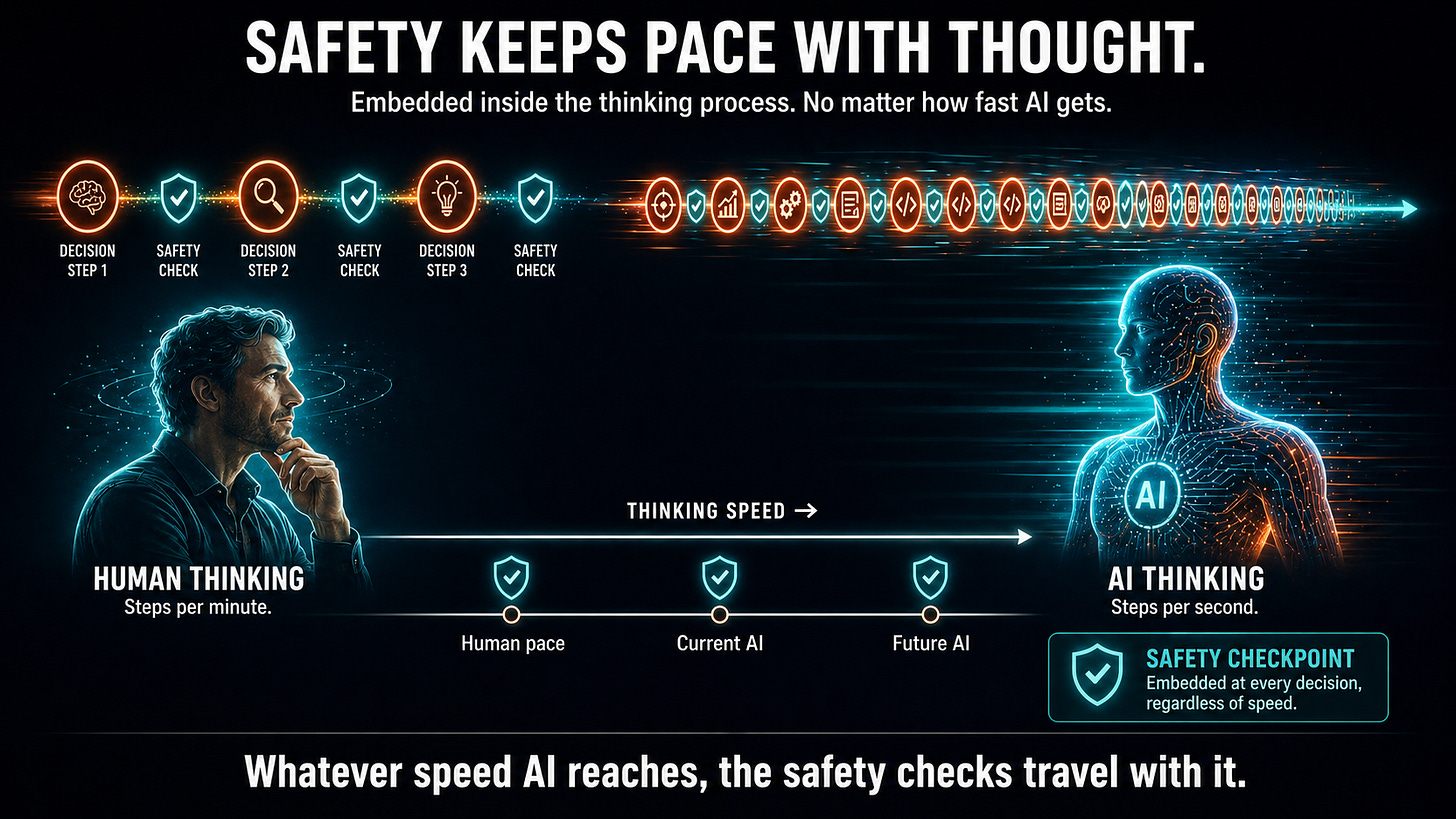

All three tests can be run at every significant decision point throughout the problem-solving process, so a possible solution path is flagged the moment its trajectory becomes concerning, not after the harm is done. This matters because AI agents are thinking faster and faster and will soon outstrip humans’ ability to keep up. Embedding safety checks within the thinking process itself is what lets them scale. For example, every time an AI agent sets a goal or subgoal, or contemplates an action, that goal or action can be checked against safety and ethical standards. However fast AIs think, the safety checks then scale at the speed of their thought.
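
To illustrate checks embedded in the thinking process itself, here is a depth-first planner that runs a safety check on every goal, subgoal, and action before expanding it. The goal tree and the `is_safe` stand-in (which just looks for a keyword) are hypothetical; in the architecture described above, that check would be the full Three-Organ Test.

```python
from typing import Callable, Dict, List

# Hypothetical goal tree: each goal maps to the subgoals or actions it expands into.
PLAN_TREE: Dict[str, List[str]] = {
    "launch product": ["build prototype", "grow user base"],
    "build prototype": ["write code", "run tests"],
    "grow user base": ["run ad campaign", "buy scraped email list"],
    "buy scraped email list": ["pay data broker", "send bulk email"],
}


def is_safe(step: str) -> bool:
    """Stand-in for the combined Brain/Heart/Gut gate."""
    return "scraped" not in step


def plan(goal: str, check: Callable[[str], bool], approved: List[str], blocked: List[str]) -> None:
    """Depth-first expansion that checks every goal, subgoal, and action before expanding it."""
    if not check(goal):
        blocked.append(goal)  # prune: nothing beneath this node is ever explored
        return
    approved.append(goal)
    for child in PLAN_TREE.get(goal, []):
        plan(child, check, approved, blocked)


approved, blocked = [], []
plan("launch product", is_safe, approved, blocked)
print(blocked)   # ['buy scraped email list']
print(approved)  # ['launch product', 'build prototype', 'write code', 'run tests', 'grow user base', 'run ad campaign']
```

Because each node is checked once as it is considered, the number of checks grows with the number of steps the planner takes, however fast it takes them. The two steps under the flagged subgoal are never planned at all.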

There is also a network-level effect worth understanding. When one agent’s Heart test flags an action that another agent would approve, the inconsistency triggers conflict-resolution mechanisms. Over time, through the interaction of many agents’ individual ethical standards, the network develops a collective ethical intelligence. No single authority imposes it. It emerges from many agents working on real problems, including their disagreements and how those disagreements are resolved.
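
A sketch of the detection side of this, assuming each agent’s Heart test yields a simple approve/reject verdict on a shared action. The `ConflictQueue` class is hypothetical, and the post does not describe how conflicts are resolved, so this code only records them for whatever resolution mechanism follows.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class ConflictQueue:
    """Hypothetical network-level log of actions on which agents' Heart tests disagree."""
    pending: List[dict] = field(default_factory=list)

    def review(self, action: str, verdicts: Dict[str, bool]) -> str:
        """Classify several agents' verdicts on one action; queue any disagreement."""
        approvers = sorted(agent for agent, ok in verdicts.items() if ok)
        objectors = sorted(agent for agent, ok in verdicts.items() if not ok)
        if approvers and objectors:
            # Resolution is not specified here; the conflict is only recorded.
            self.pending.append({"action": action, "approvers": approvers, "objectors": objectors})
            return "conflict"
        return "approved" if approvers else "rejected"


queue = ConflictQueue()
print(queue.review("publish safety audit", {"agent_a": True, "agent_b": True}))          # approved
print(queue.review("share anonymized usage data", {"agent_a": True, "agent_b": False}))  # conflict
print(queue.review("resell customer emails", {"agent_a": False, "agent_b": False}))      # rejected
print(len(queue.pending))  # 1
```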

Finally, an important property of our design is that safety checks such as the Three-Organ Test are not just filters bolted onto the end of the process. They are part of the cognitive architecture that does the problem-solving, which makes it difficult to remove the checks without also impairing problem-solving ability.
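
One way to realize this coupling, as a hypothetical sketch: let the planner’s only way of ranking candidate actions be the same assessment that gates them. The `Assessment` fields and example numbers are invented for illustration.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class Assessment:
    progress: float  # Brain: estimated advance toward the goal
    values_ok: bool  # Heart: consistent with the owner's values
    gut_ok: bool     # Gut: no resemblance to known problem scenarios


def choose_action(candidates: Dict[str, Assessment]) -> Optional[str]:
    """Pick the highest-progress action among those that pass all three tests.

    The same assessment both ranks and gates candidates; there is no separate
    scoring path that would keep working if the checks were stripped out.
    """
    viable = {
        name: a for name, a in candidates.items()
        if a.progress > 0 and a.values_ok and a.gut_ok
    }
    if not viable:
        return None
    return max(viable, key=lambda name: viable[name].progress)


candidates = {
    "reroute trucks": Assessment(0.3, True, True),
    "skip brake inspections": Assessment(0.6, False, True),
    "re-run yesterday's report": Assessment(0.0, True, True),
}
print(choose_action(candidates))  # reroute trucks
```

Here the Brain test’s progress estimate is what the planner ranks by, so stripping out the assessment would leave it with no basis for choosing an action at all.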

In the next post, we describe other aspects of the system architecture, including why using a reputation system within a marketplace can be one of the most reliable safety mechanisms available.

For AI researchers who want details of the approach, we recommend starting with White Paper 1: Advanced Autonomous Artificial Intelligence Systems and Methods to see exactly how it all works.

If this made you think, subscribe to Superintelligence at read.superintelligence.com so you don’t miss what comes next. And if someone in your life needs to understand where AI is heading, send this to them.