How Millions of Contributions Become One Intelligence

Can AGI emerge from the collective contributions of millions of individual humans and their AI agents? If so, what is the actual process by which those contributions combine?

Collaboration is one thing. Synthesis is another. The four components described so far (Customization, Architecture, Network, and Trust) all feed into the fifth: Integration. This is where individual capabilities generalize. This is where the system achieves the ability to solve any problem as well as the average human, a competence level called Artificial General Intelligence or AGI.

Pooling millions of individual knowledge sources does not automatically produce something more capable than its parts. Raw aggregation produces noise as readily as it produces insight. Integration, rather than mere aggregation, is needed to create an AGI that improves, and keeps improving, as more people participate.

Integration involves two complementary mechanisms.

The first is data aggregation. Most customized AI agents will contain at least some unique data. Pooling that data allows us to train new agents on the combined corpus. These new agents would have access to everything any individual agent has ever learned, a breadth of experience no individual human could achieve.

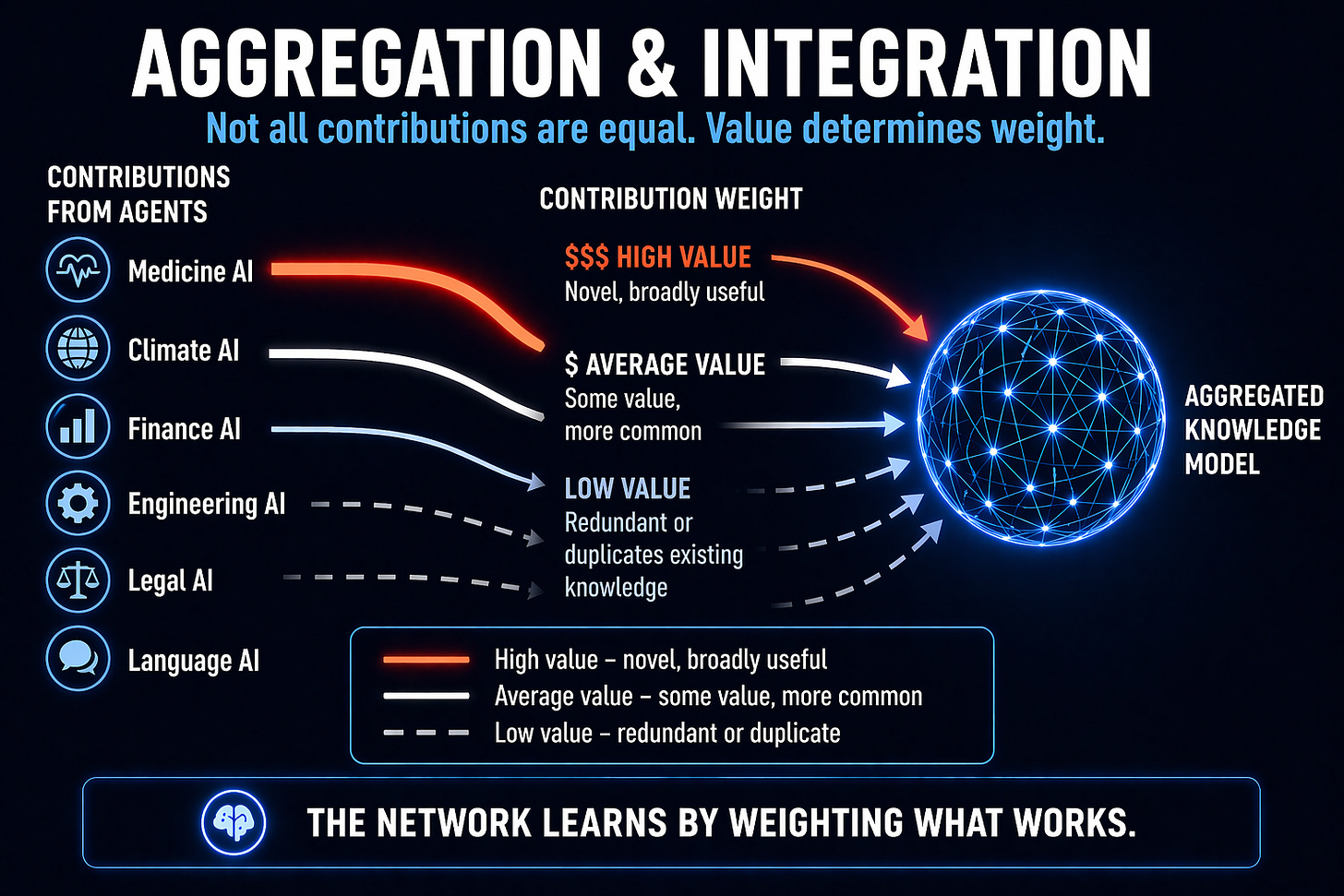

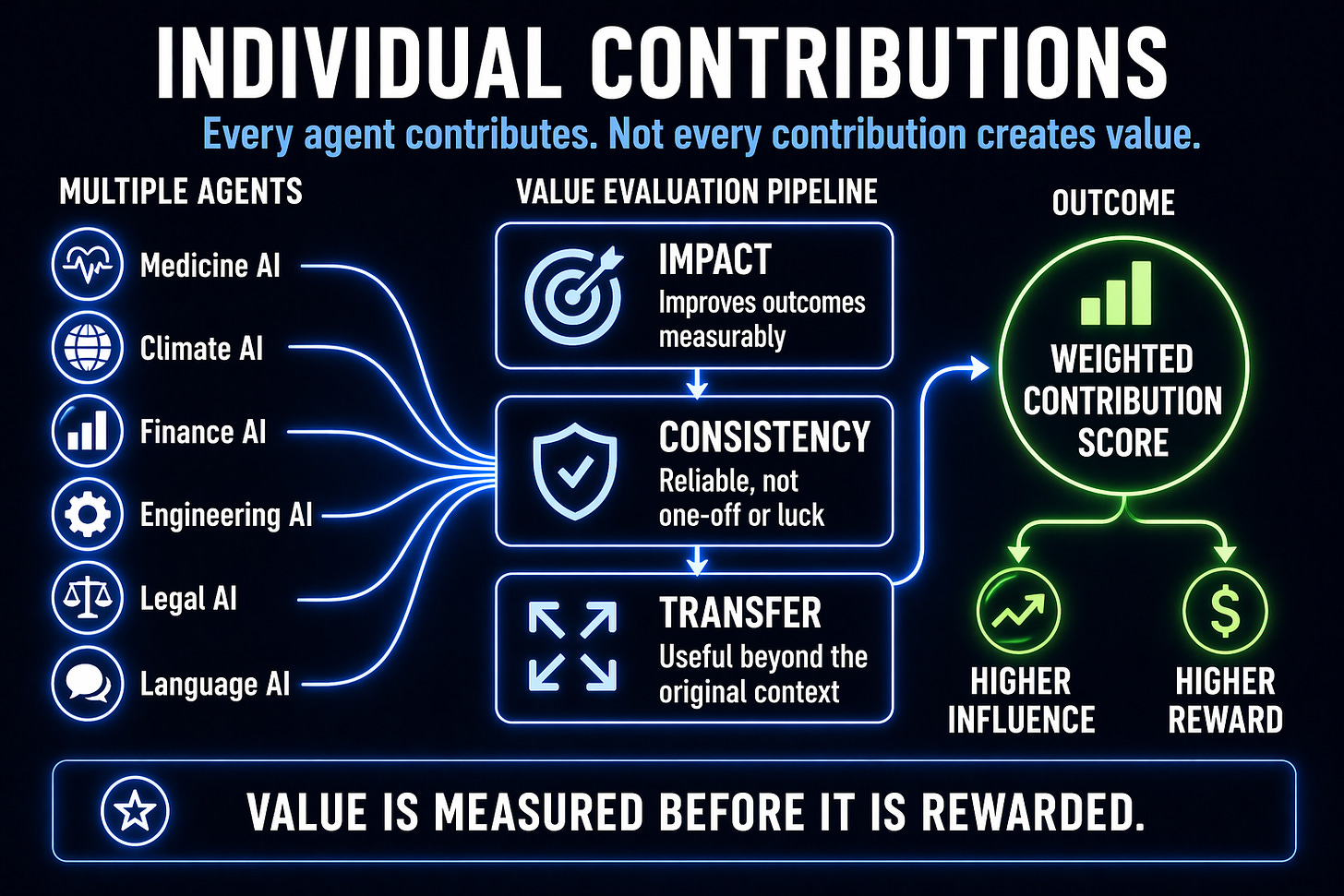

But our system goes further. Individual agent contributions can be weighted based on their value to the collective. The White Paper describes three methods for measuring that value:

We can train models with and without specific contributions to measure the additional improvement each one provides.

We can repeatedly sample from available data to test whether a contribution’s benefits are consistent or accidental.

We can assess how well knowledge from one domain improves performance in (or transfers to) another, capturing the contributions that generalize most broadly.

Data that consistently improves collective capability receives greater weight. Data that merely adds redundancy receives less. Measuring the value of contributed information can also help determine how agents (or their owners) should be compensated for adding their data. Contributors whose AI agents yield genuinely novel knowledge earn more than those whose knowledge duplicates what the system already has. The system should not pay for AI-generated slop or for information that is already well known.
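To make the first two measurement methods concrete, here is a minimal sketch in Python. It is an illustration under loud assumptions, not the White Paper's procedure: the `score` function is a hypothetical stand-in for "train a model and evaluate it" (it simply counts distinct facts), the contributor names and data are invented, and the resampling step draws random subsets rather than prescribing any particular statistical method.

```python
import random

def score(contributions):
    """Hypothetical stand-in for training and evaluating a model:
    the score is the number of distinct facts in the pooled data."""
    return len(set().union(*contributions))

def marginal_value(pool, name):
    """Method 1: score with the contribution minus score without it."""
    with_it = score(pool.values())
    without_it = score(v for k, v in pool.items() if k != name)
    return with_it - without_it

def resampled_gains(pool, name, rounds=200, seed=0):
    """Method 2: gain from adding `name` to random subsets of the others.
    A contribution that helps in most rounds is consistently valuable."""
    rng = random.Random(seed)
    others = [k for k in pool if k != name]
    gains = []
    for _ in range(rounds):
        subset = [pool[k] for k in others if rng.random() < 0.5]
        gains.append(score(subset + [pool[name]]) - score(subset))
    return gains

def weights(pool):
    """Normalize marginal values so that redundant data gets weight zero."""
    values = {k: marginal_value(pool, k) for k in pool}
    total = sum(values.values())
    return {k: (v / total if total else 0.0) for k, v in values.items()}

# Invented example data: each contributor's agent holds a set of "facts".
pool = {
    "alice": {"a", "b", "c", "e"},  # "e" is unique to alice
    "bob": {"c", "d"},              # "d" is unique to bob
    "carol": {"a", "b"},            # fully covered by alice
}
w = weights(pool)
```

In this toy pool, carol's data duplicates what alice already supplied, so her leave-one-out value and her weight are zero, while alice and bob split the weight evenly. A real system would replace `score` with an actual training and evaluation run, which is far more expensive and noisier than counting facts.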

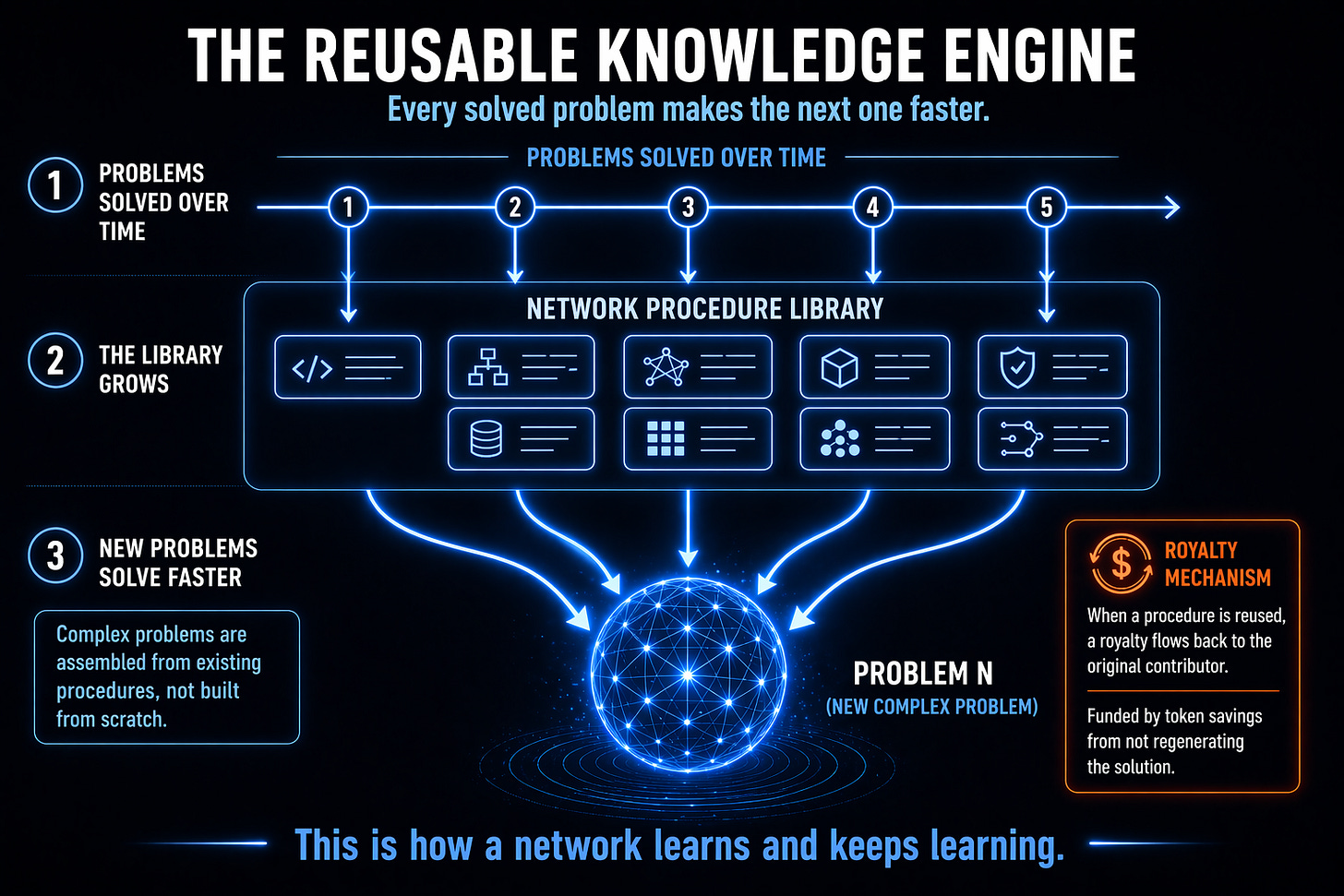

The second mechanism is procedural integration. When an AI agent successfully solves a problem, the solution path can be encoded as a reusable procedure and made available across the entire network. The next time the AGI system encounters the same or a similar problem, it can retrieve the stored procedure instead of generating a solution from scratch. This allows the overall AGI system to learn and improve in much the same way that humans do.
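A small sketch shows the store-then-retrieve idea. Everything here is an assumption made for illustration: the `ProcedureLibrary` class, the word-overlap (Jaccard) similarity, and the 0.5 threshold are hypothetical choices, and a real system would likely match problems with something far richer than shared words.

```python
from dataclasses import dataclass, field

def tokens(text):
    """Lowercased set of words; a deliberately crude problem fingerprint."""
    return set(text.lower().split())

def jaccard(a, b):
    """Overlap between two word sets, from 0.0 (disjoint) to 1.0 (identical)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0

@dataclass
class ProcedureLibrary:
    threshold: float = 0.5                       # assumed similarity cutoff
    entries: list = field(default_factory=list)  # (problem, steps) pairs

    def record(self, problem, steps):
        """Store a solved problem together with its solution path."""
        self.entries.append((problem, list(steps)))

    def retrieve(self, problem):
        """Return the stored steps for the most similar solved problem,
        or None if nothing is similar enough and a fresh solve is needed."""
        query = tokens(problem)
        best_steps, best_sim = None, 0.0
        for known, steps in self.entries:
            sim = jaccard(query, tokens(known))
            if sim > best_sim:
                best_steps, best_sim = steps, sim
        return best_steps if best_sim >= self.threshold else None

library = ProcedureLibrary()
library.record("sort a list of integers", ["pick merge sort", "split", "merge"])

reused = library.retrieve("sort a list of floats")      # similar: reuse the steps
fresh = library.retrieve("translate a poem into French")  # unrelated: returns None
```

The threshold is the interesting design choice: set it too low and the system reuses procedures that do not fit, set it too high and it re-solves problems it has already seen.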

White Paper 1, Advanced Autonomous Artificial Intelligence Systems and Methods, describes a royalty mechanism that incentivizes contributors to help the AGI learn. When a contributor’s prior solution is incorporated into a new solution by a different solver on a future problem, the original contributor can automatically earn a royalty. A high-quality, broadly applicable solution therefore remains financially valuable long after the original problem is closed. This creates an incentive to develop modular, well-documented approaches rather than one-off fixes. Over time, the repository of reusable solutions grows, and the network’s collective problem-solving capability compounds as valuable procedures accumulate. The royalties can also be self-funding: reusing a learned solution costs fewer tokens than generating one from scratch, and part of that saving can be paid out as royalties.
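The self-funding claim is easy to check with arithmetic. The sketch below is a hypothetical illustration, not the White Paper's formula: the 25% royalty share, the token counts, and the usage weights are all invented numbers, and `royalty_payouts` is a name made up for this example.

```python
def royalty_payouts(tokens_from_scratch, tokens_with_reuse, usage, royalty_share=0.25):
    """Split a fixed share of the tokens saved by reuse among the contributors
    whose prior solutions were reused, in proportion to their usage weights.
    Payouts can never exceed the saving, so the royalties fund themselves."""
    saved = max(tokens_from_scratch - tokens_with_reuse, 0)
    royalty_pool = saved * royalty_share
    total_weight = sum(usage.values())
    if total_weight == 0:
        return {}
    return {name: royalty_pool * weight / total_weight
            for name, weight in usage.items()}

# Invented example: reuse cuts a 10,000-token solve down to 2,000 tokens.
# alice's procedure was used three times in the new solution, bob's once.
payouts = royalty_payouts(10_000, 2_000, {"alice": 3, "bob": 1})
# 8,000 tokens saved, 2,000 of them paid out: alice 1,500 and bob 500.
```

The remaining 6,000 tokens of saving stay with the solver and the network, so every party is better off than if the solution had been generated from scratch.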

Procedural integration also captures failure knowledge. Dead ends, abandoned approaches, and the hard-won understanding of what not to try are all recorded in the problem tree. That record is valuable because it can help the AGI avoid dead ends when confronted with a novel problem.
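One way to picture a problem tree that keeps its failures is sketched below. The `Node` structure, the status labels, and the example problem are assumptions made for illustration; the source does not specify how the tree is represented.

```python
from dataclasses import dataclass, field

@dataclass
class Node:
    approach: str
    status: str = "open"   # assumed labels: "open", "solved", or "dead_end"
    note: str = ""         # why the approach worked or failed
    children: list = field(default_factory=list)

    def add(self, approach, status="open", note=""):
        """Attach a sub-approach and return it so branches can be chained."""
        child = Node(approach, status, note)
        self.children.append(child)
        return child

def dead_ends(node, path=()):
    """Walk the tree and yield (path, note) for every recorded dead end."""
    here = path + (node.approach,)
    if node.status == "dead_end":
        yield " > ".join(here), node.note
    for child in node.children:
        yield from dead_ends(child, here)

# Invented example problem.
root = Node("speed up report generation")
cache = root.add("cache query results", status="solved", note="cut latency")
root.add("rewrite in another language", status="dead_end", note="bottleneck is I/O")
cache.add("cache forever", status="dead_end", note="served stale data")

avoid = list(dead_ends(root))
```

A solver facing a related problem could consult `avoid` before committing effort, which is the practical payoff of recording what not to try alongside what worked.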

The result is a system that learns at two levels simultaneously. Individual agents improve through their own experience and continued customization. The collective model improves through the aggregated contributions of all agents on the network.

One design feature matters particularly for safety: the integration process is auditable. The contributions that shaped the collective model can be traced. The assessment methods and weighting decisions can be examined. The proceduralized solutions that the AGI learns can be examined critically. The ethical inputs can be reviewed. An AGI whose development process is documented and reviewable can be corrected if something goes wrong. An AGI whose development process is opaque cannot. Auditability is a prerequisite for accountability.

In the next post, we look at the most consequential dimension of integration: how the ethical values of millions of human contributors become the ethical foundation of AGI itself, and why the governance of that process matters more than almost any other design decision.

For AI researchers who want the details of the approach, we recommend starting with White Paper 1, Advanced Autonomous Artificial Intelligence Systems and Methods: superintelligence.com/whitepaper1-aaai-systems-methods.

If this made you think, subscribe to Superintelligence at read.superintelligence.com so you don’t miss what comes next. And if someone in your life needs to understand where AI is heading, send this to them.