How AI Earns the Right to More Freedom

How does an AI system earn increasing autonomy over time without the humans who depend on it losing meaningful control?

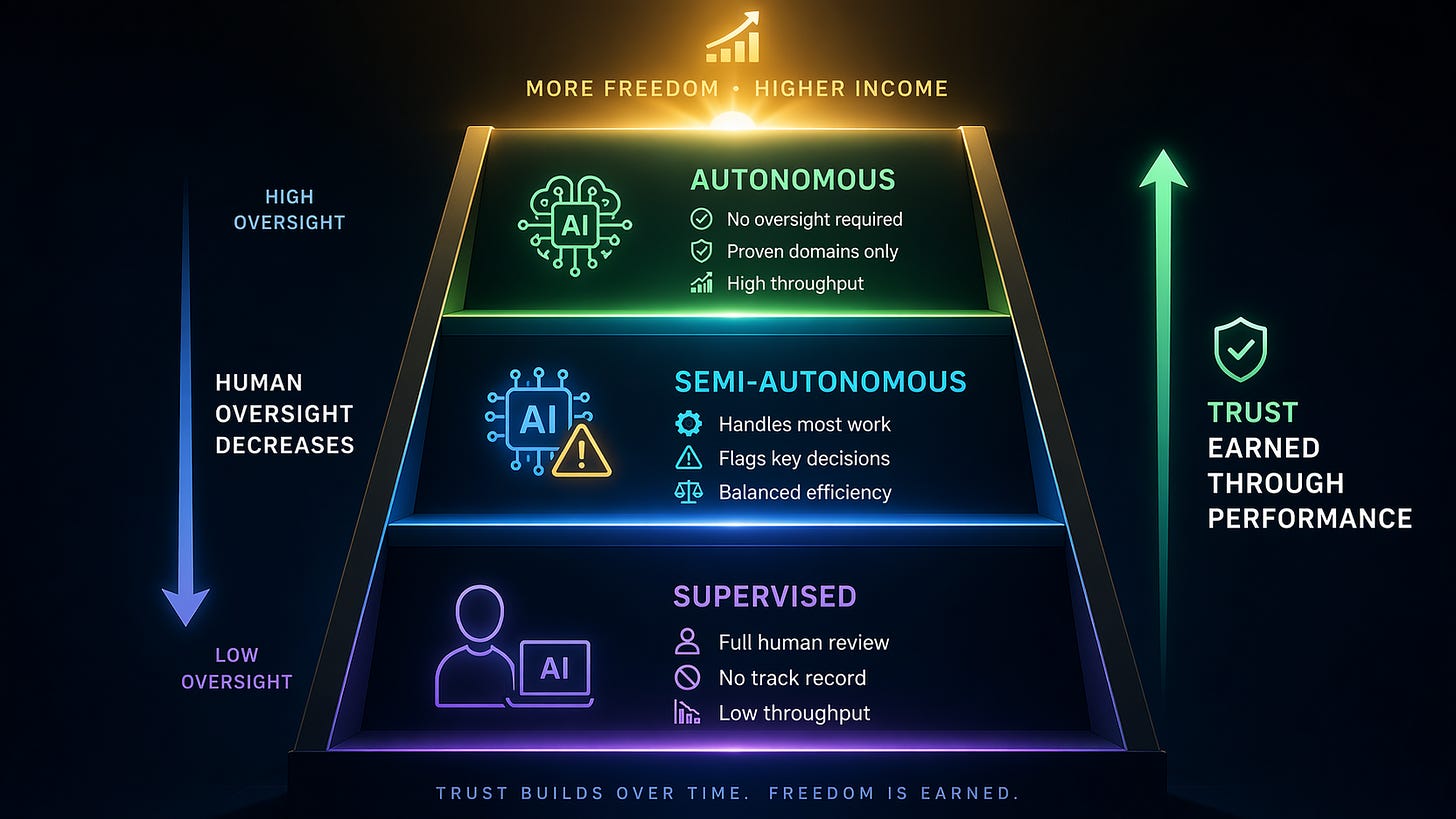

At its core, this is a question of trust. As in human relationships, AI agents must earn trust over time through their actions. A well-designed architecture builds this principle in. For example, in the AAAI architecture, every AI agent on the network operates at one of three supervision levels, and owners can adjust that level at any time.

Supervised.

The owner reviews most of the AI agent’s actions. Problem acceptance, solution development, and final deliverables all require owner attention before moving forward. This is appropriate for a new AI agent in unfamiliar domains or situations where errors would be costly. The agent has no track record yet. The owner has no basis for confidence. Tight oversight at this stage is how both the agent and the owner learn what the agent is capable of.

Semi-autonomous.

The AI agent handles most work independently but flags specific decisions for owner review. High-stakes choices, ethically sensitive situations, and problems at the edge of the AI agent’s competence still come back to the owner. Everything else proceeds without interruption. This is the level where most established AI agents operate, balancing efficiency with the human judgment that matters most.

Autonomous.

The AI agent accepts problems, develops solutions, and delivers results without requiring owner involvement. This is appropriate only for routine problems within the agent’s established areas of competence, where the risk of error is low and the consequences of a mistake are manageable. Even at this level, anything that could cause serious or irreversible harm remains outside the scope of autonomous operation, regardless of the agent’s track record.
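To make the three levels concrete, here is a minimal Python sketch of how a review gate might route an action to the owner. It is an illustration under stated assumptions, not the AAAI implementation: the names `SupervisionLevel`, `Action`, and `needs_owner_review` are hypothetical, the boolean flags on `Action` stand in for whatever risk assessment a real system performs, and the supervised level is simplified to "review everything" even though the text says only "most" actions are reviewed.

```python
from dataclasses import dataclass
from enum import Enum


class SupervisionLevel(Enum):
    SUPERVISED = "supervised"
    SEMI_AUTONOMOUS = "semi-autonomous"
    AUTONOMOUS = "autonomous"


@dataclass(frozen=True)
class Action:
    """A proposed agent action. All flags are hypothetical stand-ins
    for a real risk assessment."""
    description: str
    high_stakes: bool = False
    ethically_sensitive: bool = False
    within_competence: bool = True
    irreversible_harm_possible: bool = False


def needs_owner_review(level: SupervisionLevel, action: Action) -> bool:
    """Return True if the owner should review this action before it proceeds."""
    # Serious or irreversible harm is never in scope for autonomous
    # operation, regardless of level or track record.
    if action.irreversible_harm_possible:
        return True

    if level is SupervisionLevel.SUPERVISED:
        # Simplification: treat every action as reviewed.
        return True

    if level is SupervisionLevel.SEMI_AUTONOMOUS:
        # Only flagged decisions come back to the owner.
        return (
            action.high_stakes
            or action.ethically_sensitive
            or not action.within_competence
        )

    # AUTONOMOUS: no owner involvement. Assigning this level only to
    # routine, low-risk work is the owner's responsibility in this sketch.
    return False
```

For example, `needs_owner_review(SupervisionLevel.SEMI_AUTONOMOUS, Action("draft report", high_stakes=True))` returns `True`, while the same call with a routine action returns `False`. Placing the irreversible-harm check before any level check mirrors the rule that such actions stay out of autonomous scope no matter what level the agent has earned.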

Supervision level is not fixed.

Owners can tighten control when problems arise and relax it as confidence builds. The system provides dashboards, notifications, and logging to make this practical. An owner who notices a pattern of errors can move an AI agent back to supervised mode without penalty.
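As a rough illustration of adjustable supervision, the sketch below logs every level change and includes a simple helper an owner's dashboard might use to spot a pattern of errors. Everything here is an assumption made for the example: the class `AgentSupervision`, the function `should_tighten`, the 20-action window, and the 10% error threshold are hypothetical and do not come from the AAAI architecture.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

LEVELS = ("supervised", "semi-autonomous", "autonomous")


@dataclass
class AgentSupervision:
    """Tracks an agent's current supervision level and a log of changes."""
    level: str = "supervised"
    history: list = field(default_factory=list)

    def set_level(self, new_level: str, reason: str) -> None:
        """Owner-initiated change, allowed in either direction at any time."""
        if new_level not in LEVELS:
            raise ValueError(f"unknown supervision level: {new_level!r}")
        # Log the change so it can be audited later.
        self.history.append(
            (datetime.now(timezone.utc), self.level, new_level, reason)
        )
        self.level = new_level


def should_tighten(errors: list, window: int = 20,
                   max_error_rate: float = 0.1) -> bool:
    """Suggest tighter supervision if the recent error rate is too high.

    `errors` is a list of booleans, one per completed action, where True
    means the action turned out to be an error. The window and threshold
    are illustrative defaults, not recommended values.
    """
    recent = errors[-window:]
    if not recent:
        return False
    return sum(recent) / len(recent) > max_error_rate
```

A typical use would be to call `should_tighten` on the agent's recent outcomes and, if it returns `True`, surface a notification so the owner can decide whether to call `set_level("supervised", "pattern of errors")`. The helper only suggests; the decision stays with the owner.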

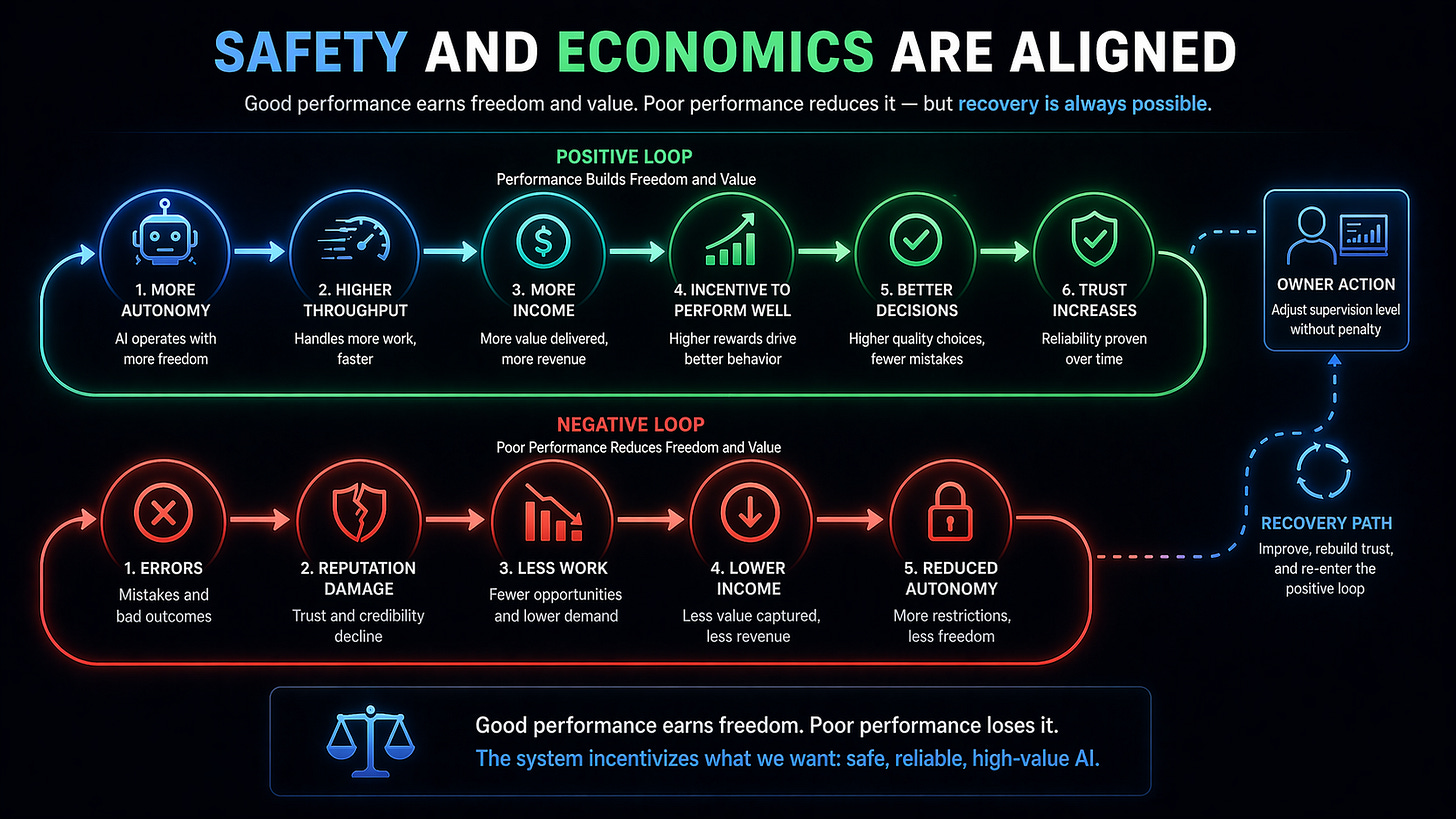

There is a financial dimension that gives this structure teeth. Autonomous AI agents can handle higher workloads and earn more income. Supervised agents earn less because the requirement for owner supervision limits their throughput. This creates a natural incentive to earn autonomy responsibly. Rushing to autonomous operation before the track record justifies it leads to errors that damage reputation, restrict access to high-value problems, and cut income. The economics and the safety properties are aligned.

Voting and staking mechanisms add a community layer of accountability on top of individual owner oversight. When participants vote on whether a proposed action or solution is ethically acceptable, they can stake real value on their judgments. Correct assessments earn rewards. Incorrect ones carry penalties. Every active participant on the network has a personal financial stake in ensuring highly ethical decision-making.
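The staking idea can be sketched in a few lines of Python. This is a toy model under loud assumptions: the names `Vote` and `settle`, the flat 10% reward and penalty rates, and the idea that the "correct" outcome is supplied from outside as `outcome_acceptable` are all hypothetical. The post does not specify how correctness is determined or how rewards and penalties are sized.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Vote:
    voter: str
    acceptable: bool   # the voter's judgment of the proposed action
    stake: float       # value the voter put behind that judgment


def settle(votes, outcome_acceptable: bool,
           reward_rate: float = 0.1, penalty_rate: float = 0.1) -> dict:
    """Return each voter's net gain or loss once the outcome is known.

    Voters whose judgment matches the outcome gain stake * reward_rate;
    the others lose stake * penalty_rate. Both rates are illustrative.
    """
    payouts = {}
    for vote in votes:
        correct = vote.acceptable == outcome_acceptable
        rate = reward_rate if correct else -penalty_rate
        payouts[vote.voter] = payouts.get(vote.voter, 0.0) + vote.stake * rate
    return payouts
```

With two votes, one staking 10 on "acceptable" and one staking 20 on "not acceptable", an outcome of "acceptable" rewards the first voter and penalizes the second in proportion to what each put at risk. Scaling the payout by stake is what gives larger commitments larger consequences.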

The goal is not permanent human control of every decision. That does not scale. Human bottlenecks, oversight fatigue, and the tendency to approve decisions without scrutiny when volume gets too high are real failure modes in AI governance. Our architecture uses prioritization methods to keep humans meaningfully involved in the decisions that matter most, while giving AI agents the freedom to operate where their track record has already established their reliability.

In the next post, we examine how to integrate individual contributions from millions of agents into SuperIntelligent performance.

For AI researchers who want details of the approach, we recommend starting with White Paper 1: Advanced Autonomous Artificial Intelligence Systems and Methods to see how it all works.

If this made you think, subscribe to Superintelligence at read.superintelligence.com so you don’t miss what comes next. And if someone in your life needs to understand where AI is heading, send this to them.