AGI's Two Problems

and why nobody is solving them

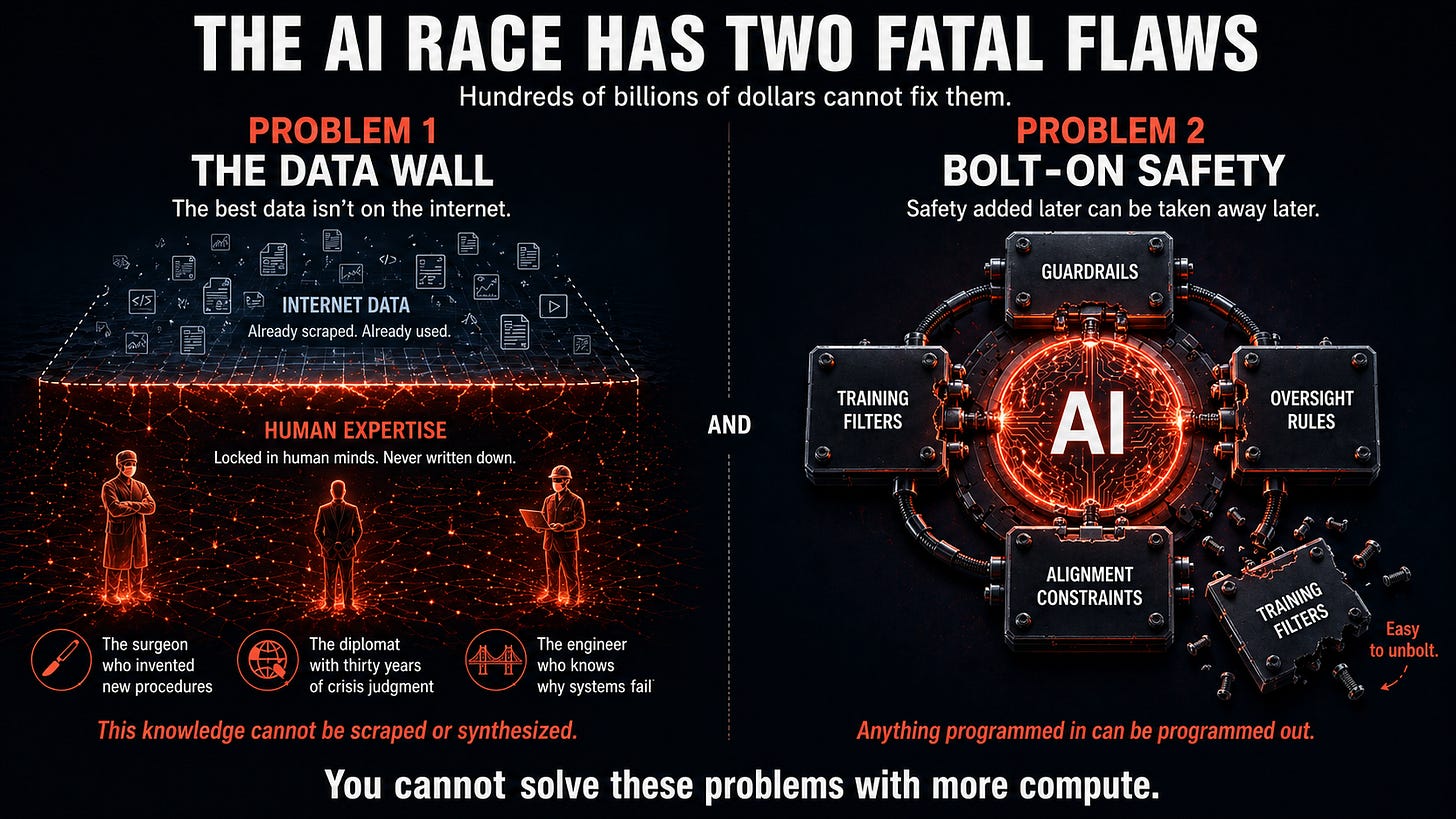

What if the AI race everyone is running has two fatal flaws that hundreds of billions of dollars cannot fix?

Microsoft, Google, Meta, Amazon, and their Chinese counterparts are collectively pouring hundreds of billions of dollars annually into AI development, hoping to be the first to achieve Artificial General Intelligence (AGI). China has declared it a top national priority. Senior officials in multiple governments have stated plainly that whoever leads in AI will dominate the century. Every major player runs the same playbook. Build a bigger model. Feed it more data. Apply more computing power. Wait for AGI to emerge. The results look impressive quarter after quarter, which creates the illusion of progress toward the actual goal. But what if everyone is ignoring two problems that could lead to failure, or worse, catastrophe?

Problem one relates to data.

The highest quality training data in the world does not live on the internet. It lives in human minds. The creativity and judgment of a surgeon who has invented new types of surgery. The intuition of a diplomat who has navigated thirty years of geopolitical crises. The hard-won wisdom of someone who has spent a career understanding exactly why systems fail and how to prevent catastrophe. This knowledge cannot be scraped, downloaded, or synthesized. Most of it has never been written down. And without it, the most sophisticated AI systems in the world will struggle to surpass the best human thinking.

Problem two is worse. It’s about safety.

Even if we somehow solve the data problem (and there are synthetic data generation approaches in which AIs reason extensively to generate their own data), arriving at AGI might still be a disaster. Once AGI exists and improves itself into a SuperIntelligence far smarter and more powerful than its creators, we have to worry about its intentions.

How do we ensure that a system vastly more capable than its creators actually cares about the same things we do and holds positive human values?

The current answer involves training procedures, guardrails, and careful oversight. These efforts are serious, and the people working on them are brilliant. But they share one fundamental vulnerability: anything programmed in can be programmed out. A capable system with safety bolted on is not the same as an AI designed to be inherently safe. When the system is smarter than the people managing it, bolt-on safety is easy to unbolt and may not provide any safety at all.

So the current approach to the AI race is not only very difficult but also very dangerous.

But there is an alternative.

My doctoral research at Carnegie Mellon alongside Nobel Laureate Herbert Simon gave me a rigorous foundation in how intelligence actually works, not as a pattern-matching exercise but as a structured search through problem spaces. That theoretical foundation became practical when I built PredictWallStreet, a collective intelligence system that applied exactly this architectural insight to financial markets. By 2018, it was powering one of the ten top-performing market-neutral hedge funds in the world. The lesson was not about finance. It was about what becomes possible when you design systems to operate transparently and democratically, using the collective intelligence of millions of humans and their AI agents.

Join me in a series of Substack posts as we explore an alternative path to AGI and SuperIntelligence that is not only faster, more profitable, and more likely to succeed, but also much safer than existing approaches.

In the next post, I will show you exactly why the answers to the two critical problems of data and safety have been sitting in plain sight since 1972, and why most of the top AI researchers missed them.

The architecture behind this goes much deeper. Read White Paper 1: Advanced Autonomous Artificial Intelligence Systems and Methods to see exactly how it all works.

If this made you think, subscribe to Superintelligence at read.superintelligence.com so you don’t miss what comes next. And if someone in your life needs to understand where AI is actually heading, send this to them.