A World Powered by Safe Democratic SuperIntelligence

What would it be like to live in a world powered by Safe, Democratic SuperIntelligence?

This post challenges us to imagine a new world, powered by safe AI, in which the opportunities and benefits far outweigh the risks that we have (prudently) discussed in previous posts.

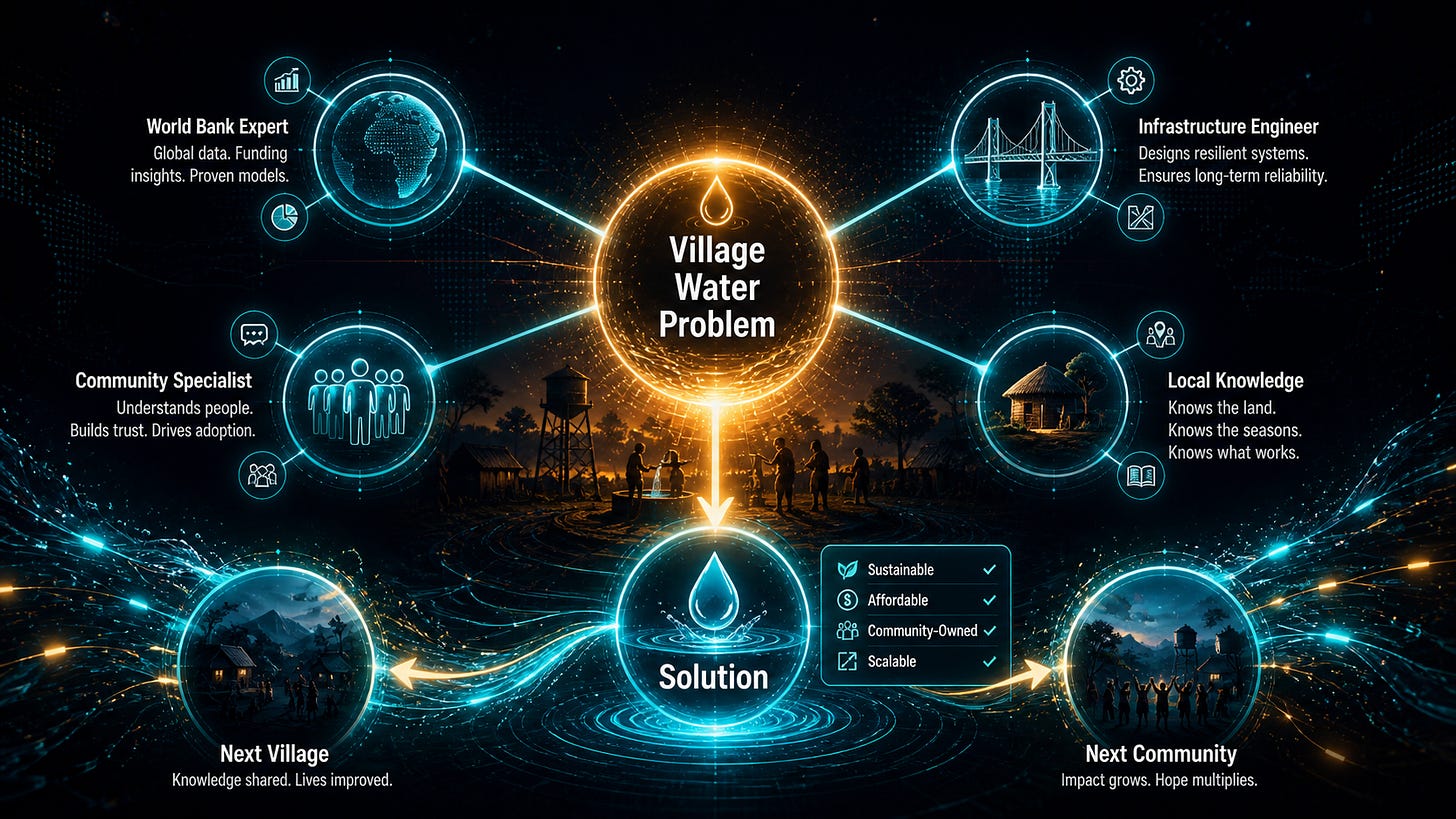

Consider the village water system example from White Paper 1, Advanced Autonomous Artificial Intelligence Systems and Methods. In that example, a development organization submits a problem to the network: design a plan to bring clean water to a specific village in central Africa.

The network brings together specialists with the required knowledge: a World Bank development expert, an infrastructure engineer, a community engagement specialist, and a local resident who knows the village's politics.

Each contributes by working on pieces of the problem captured in a shared “problem tree.”

Each earns compensation.

The village gets clean water.

The solution path, once developed, becomes a reusable procedure that helps the next community facing the same challenge.

When the solution is fully or partially reused, it costs less and deploys faster because the hard-won knowledge does not have to be rediscovered from scratch.

Royalties might even be paid (e.g., via blockchain-based contracts) to some of the original problem solvers who made inventive contributions that can be reused.
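
For readers who think in code, the water system example can be sketched as a small data structure. This is only an illustrative sketch, not the design from White Paper 1: the names `ProblemNode` and `split_royalty`, the sub-problem descriptions, and the equal-share royalty rule are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class ProblemNode:
    """One node in a hypothetical problem tree: a (sub)problem and who solved it."""
    description: str
    contributor: Optional[str] = None  # specialist who solved this piece, if any
    solved: bool = False
    children: List["ProblemNode"] = field(default_factory=list)

    def add(self, description: str) -> "ProblemNode":
        """Attach a sub-problem and return it."""
        child = ProblemNode(description)
        self.children.append(child)
        return child

    def solve(self, contributor: str) -> None:
        """Record that a contributor solved this piece."""
        self.contributor = contributor
        self.solved = True

    def is_solved(self) -> bool:
        """A leaf is solved directly; a parent is solved when all its children are."""
        if not self.children:
            return self.solved
        return all(child.is_solved() for child in self.children)

    def contributors(self) -> List[str]:
        """Collect every contributor recorded anywhere in the tree."""
        names = [self.contributor] if self.contributor else []
        for child in self.children:
            names.extend(child.contributors())
        return names


def split_royalty(tree: ProblemNode, reuse_fee: float) -> Dict[str, float]:
    """Split a reuse fee equally per solved piece (an assumed policy, not the paper's)."""
    names = tree.contributors()
    if not names:
        return {}
    share = reuse_fee / len(names)
    payouts: Dict[str, float] = {}
    for name in names:
        payouts[name] = payouts.get(name, 0.0) + share
    return payouts


# Hypothetical decomposition of the village water problem.
root = ProblemNode("Bring clean water to the village")
for task, expert in [
    ("Secure funding", "development expert"),
    ("Design the infrastructure", "infrastructure engineer"),
    ("Engage the community", "community engagement specialist"),
    ("Navigate village politics", "local resident"),
]:
    root.add(task).solve(expert)

print(root.is_solved())
print(split_royalty(root, reuse_fee=100.0))
```

Once every branch is solved, the tree itself is the reusable procedure: the next community can copy it, re-solve only the branches that differ, and route a share of the reuse fee back to the original contributors.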

Now scale this basic problem-solving approach by several orders of magnitude.

There are problems that humanity has failed to solve, not because they are technically impossible, but because the knowledge required to solve them exists in scattered pieces that have never been assembled. Addressing climate change (or even regulating Earth’s climate), for example, requires integrating climate science with the local agriculture, cultural practices, and economic constraints specific to each affected community.

Healthcare in low-income countries can improve by reusing best practices and solutions developed by SuperIntelligence. Educational solutions that used to exist only in the heads of isolated practitioners can be reused globally at low cost.

Problems that might have been beyond the reach of even large institutions can be solved more easily, reusably, and scalably via a democratic SuperIntelligent system.

There is also a personal dimension.

White Paper 1, Advanced Autonomous Artificial Intelligence Systems and Methods, describes a scenario in which a human (Jean) discovers and corrects an ethical error made by his AI agent.

Jean wanted to take his small dog with him on a plane trip from San Francisco to Paris. Jean’s AI agent, not yet trained on animal welfare, suggested placing the pet in the overhead bin.

Jean caught the mistake, corrected it, and that correction became part of the training data for other AI agents.

By capturing the knowledge from humans like Jean, the ethical insight that animals require different treatment from inanimate objects can be propagated across the entire network.

Other AI agents that had never been trained on pet travel questions become more ethically calibrated through Jean’s attention and ethical corrections.
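
The propagation step in Jean's story can be illustrated with a toy model. This is a sketch under stated assumptions: in a real system the correction would become training data, whereas here a shared lookup table stands in for training. The names `Correction`, `SharedCorrections`, and `Agent`, and the wording of the preferred action, are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Correction:
    """A human's fix to an agent's suggestion (hypothetical record format)."""
    situation: str
    rejected_action: str
    preferred_action: str
    corrected_by: str


class SharedCorrections:
    """Stand-in for network-wide training data: corrections indexed by situation."""

    def __init__(self) -> None:
        self._by_situation: Dict[str, List[Correction]] = {}

    def publish(self, correction: Correction) -> None:
        self._by_situation.setdefault(correction.situation, []).append(correction)

    def lookup(self, situation: str) -> List[Correction]:
        return list(self._by_situation.get(situation, []))


class Agent:
    """An AI agent that checks shared corrections before making a suggestion."""

    def __init__(self, name: str, shared: SharedCorrections) -> None:
        self.name = name
        self.shared = shared

    def propose(self, situation: str, default_action: str) -> str:
        # Use the human-preferred action if this default was rejected before.
        for correction in self.shared.lookup(situation):
            if correction.rejected_action == default_action:
                return correction.preferred_action
        return default_action


shared = SharedCorrections()
jeans_agent = Agent("Jean's agent", shared)
other_agent = Agent("another agent", shared)

situation = "small dog on a flight"
bad_idea = "place it in the overhead bin"

before = other_agent.propose(situation, bad_idea)  # no correction exists yet

# Jean catches the mistake and his correction is published to the network.
shared.publish(Correction(
    situation=situation,
    rejected_action=bad_idea,
    preferred_action="keep it in a carrier under the seat",  # assumed wording
    corrected_by="Jean",
))

after = other_agent.propose(situation, bad_idea)
print(before)
print(after)
```

The second agent never interacted with Jean, yet its suggestion changes once his correction is shared, while unrelated situations (a suitcase, say) are unaffected.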

This is the personal counterpart to the water system example.

Expertise accumulated over a lifetime of practice largely dies with the person who built it.

The surgeon who developed new techniques retires, taking most of what she learned with her.

The engineer who spent forty years understanding why a particular type of system fails passes that knowledge to a few direct colleagues, and then it is gone.

The customization process described in Post 4: Your AI Should Think Like You and Post 5: Your Expertise Has a Market Value is, among other things, a mechanism for preserving and sharing knowledge that would otherwise be lost.

In the past, knowledge could be passed on by mentorship or writing a book.

But with SuperIntelligence, the simple act of solving problems automatically trains the AI, and the resulting solutions can be reused across similar problems.

Scaled across millions of contributors, this represents a fundamentally different relationship between human expertise and the collective knowledge available to humanity.

There is also a democratic dimension that matters deeply.

The AGI we build will have more influence over the future of human civilization than any previous technology. Humans are primarily responsible for the values it carries and the knowledge it incorporates. I believe the best way to seed SuperIntelligence with a positive, representative set of human values and knowledge is to ensure the design is inherently democratic. An AGI built through democratic participation, in which millions of people contribute their knowledge and values through a transparent and auditable process, will be far from perfect. But it is likely to be a much safer and more robust system than one based on the values and expertise of just a few humans whose motivations may not align with those of the broader population.

In the next post, I want to talk about timing: specifically, why the architectural choices being made right now are more consequential than they might appear, and why the time window for effective action is much shorter than most people realize.

For AI researchers who want details of the approach, I recommend starting with White Paper 1: Advanced Autonomous Artificial Intelligence Systems and Methods to see how it all works.

If this made you think, subscribe to Superintelligence at read.superintelligence.com so you don’t miss what comes next. And if someone in your life needs to understand where AI is heading, send this to them.