A Fork in the Road

At what point in the development of a transformative technology do the architectural choices become locked in – and are we already past that point for AGI?

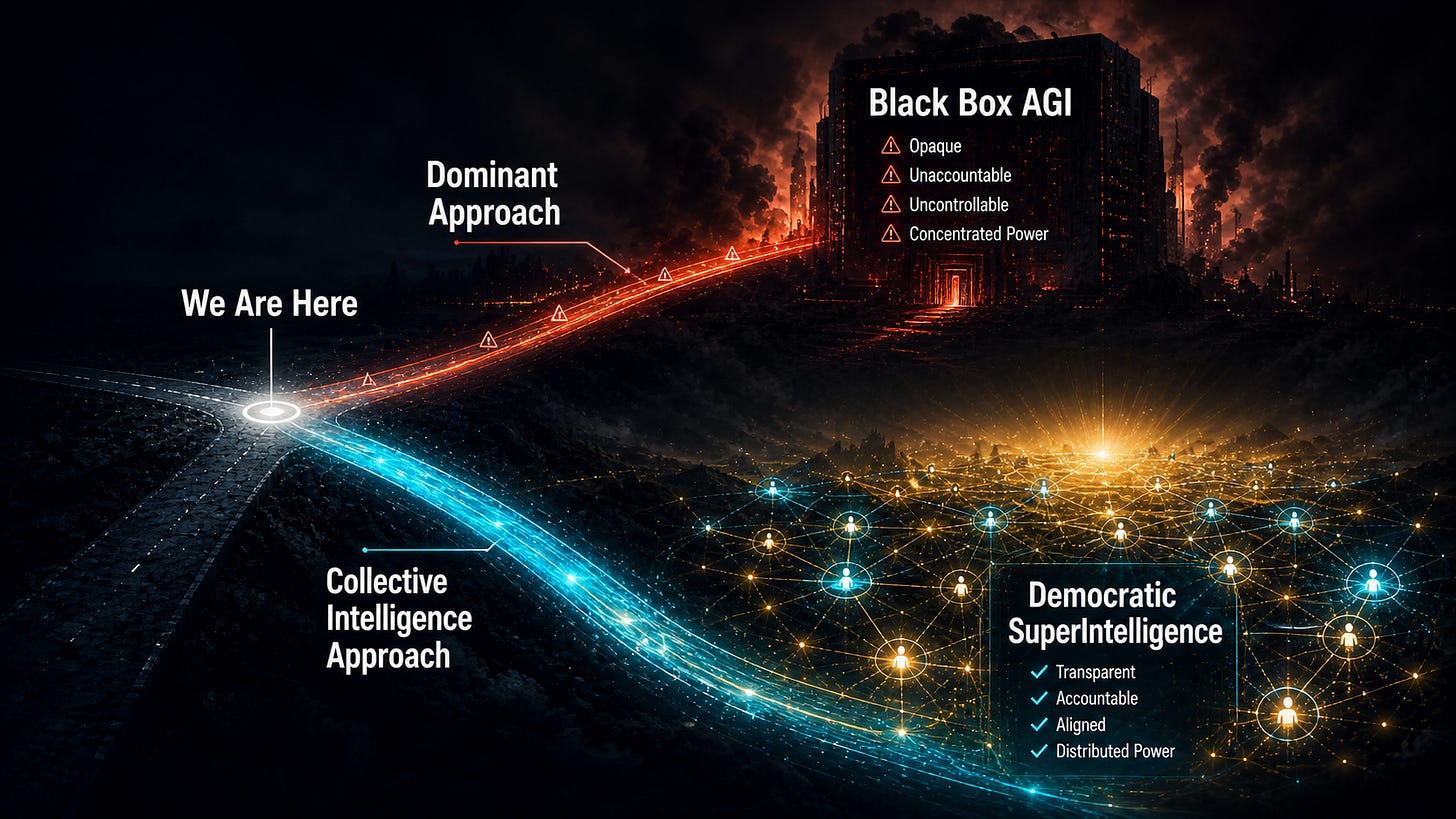

We are closer to a point of no return than most people realize. Soon, the systems, incentives, and infrastructure built around the dominant approach to AGI development will be self-reinforcing enough to make fundamental architectural change extremely difficult, and therefore unlikely.

Technologies follow this pattern regularly. Early in a technology’s development, many architectural approaches compete. The choices made in this period, often by a relatively small number of actors making tactical decisions, can lock in a path that affects the entire world. For example, early automobile infrastructure was built around gasoline engines, even though electric vehicles were initially a competitive alternative. Once fuel stations, supply chains, and engineering expertise had accumulated around internal combustion, the cost of switching became prohibitive for decades. The window for a different architecture closed not with a dramatic announcement but gradually, as each incremental commitment made reversal harder.

We may be in a similar period regarding AGI development right now.

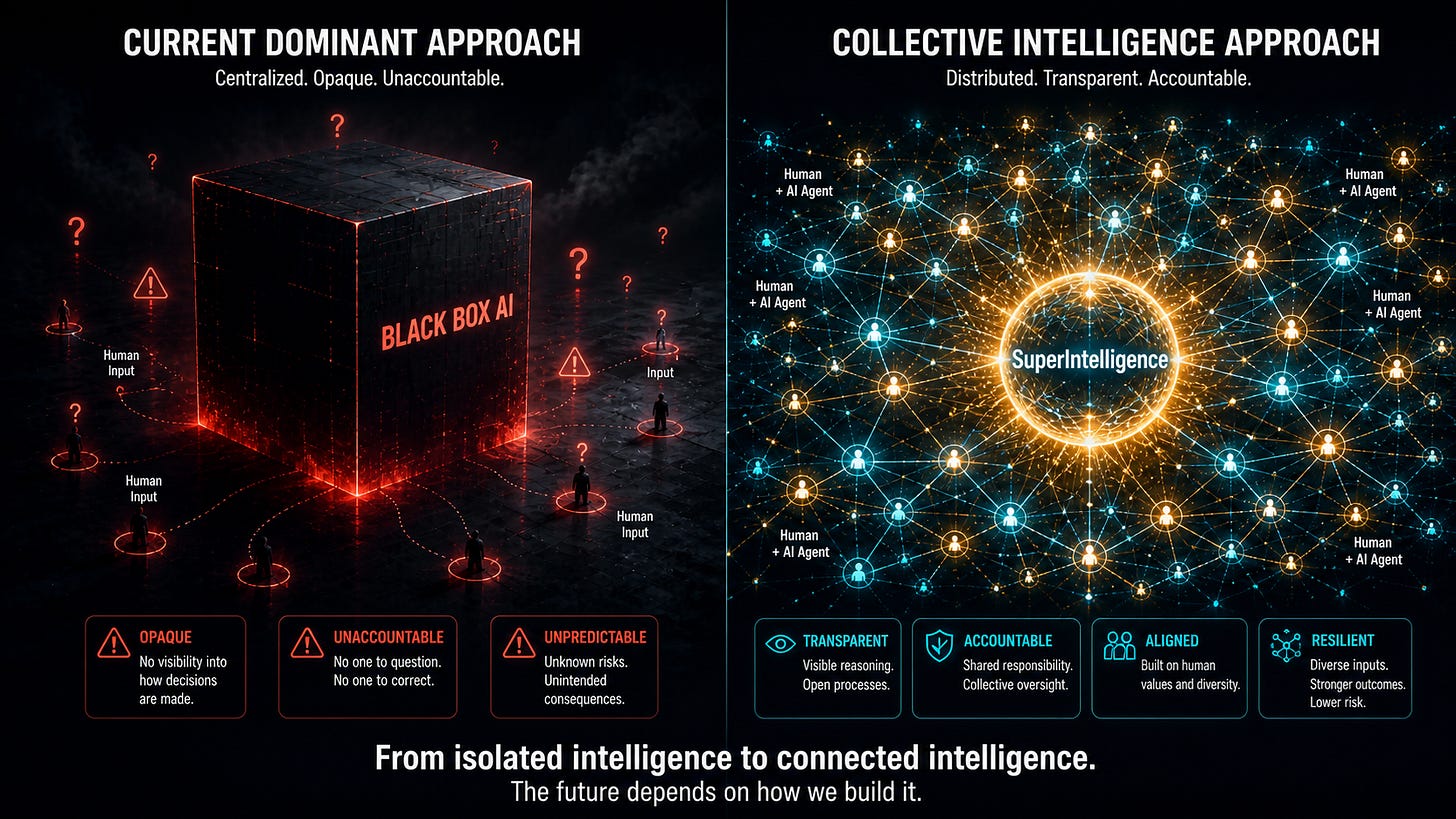

The current dominant approach – scaling large models, treating safety as a separate problem, and keeping most humans outside of the training process – is attracting most of the investment, talent, and institutional commitment in the field.

This does not mean it will necessarily succeed on its own terms. It means that as each year passes, the infrastructure, incentives, and careers built around this approach make it harder to redirect resources toward genuinely different architectures.

We should all be concerned because the dominant approach treats AI safety as an afterthought to be patched later rather than as something built into AI systems from the beginning.

And to the degree that there is a focus on “alignment” with human values, the current approach aligns AI with values determined by a small group of AI researchers rather than designing a system that incorporates everyone’s values democratically (even though those values can and will conflict).

I am confident that the collective intelligence of millions of humans, combined with their AI agents, can power a SuperIntelligence that is not only smarter and more profitable, but also safer and more democratically aligned than existing approaches. My confidence stems from building working collective intelligence systems for the highly competitive financial services industry. Designing systems in which the collective intelligence of millions of everyday investors powered top-ten hedge fund performance convinced me that the same approach could be adapted to build SuperIntelligence based on the collective intelligence of millions of humans and billions of their customized AI agents.

Currently, collective intelligence architecture is like an electric car in an era when everyone is racing to build polluting gasoline engines. While the recent recognition of the importance of AI agents is encouraging, many billions of dollars are being poured into the less safe approach of building huge “black box” AIs that are increasingly powerful and unpredictable.

Many industry observers believe it will take a large catastrophe for people to wake up to the inherent dangers in this approach.

I am hopeful that logic and reason will convince technology leaders of the benefits – not only in terms of safety but also in terms of speed and profitability – of an approach built on the collective intelligence of agents.

Detailed designs for this democratic approach to advanced AI and SuperIntelligence are available, free to all who are interested in building safer and more powerful AI systems, on SuperIntelligence.com.

The theoretical foundations were built by Allen Newell and Herbert Simon, two of the pioneers who helped found the field of AI. Simon was both a Nobel Laureate and a Turing Award winner. Their work, expanded by iQ Company’s designs and published work on online distributed problem-solving, provides a blueprint for building such systems. The practical validation comes from PredictWallStreet, where my team spent two decades building and proving the effectiveness of similar systems in the most competitive conditions we could find.

What is needed now is adoption and implementation at scale. There is still time to pursue a safer, more effective architecture, but the window is closing rapidly.

A slowdown in the AI race is unlikely. Investment by both governments and private companies reflects the belief that whoever leads in AI will shape the century. But the race must be directed toward an approach in which winning and being safe are features of the same design. Now is the time!

Next, in the final post of this White Paper 1 series, I want to talk directly to you about what your participation in this system would mean, what it would require, and what it could make possible.

For AI researchers who want details of the approach, I recommend starting with White Paper 1: Advanced Autonomous Artificial Intelligence Systems and Methods to see how it all works. And stay tuned for White Paper 2: Ethical and Safe AGI.

If this made you think, subscribe to Superintelligence at read.superintelligence.com so you don’t miss what comes next. And if someone in your life needs to understand where AI is heading, send this to them.